Premise

NetApp practitioners in the Wikibon community have been asking about the recently announced acquisition of SolidFire and how it relates to their strategic storage roadmaps. Historically, Oracle customers have had a simple storage strategy: Buy best-of-breed – i.e. EMC for block and NetApp for file storage. Oracle’s move into infrastructure (with the acquisition of Sun), its cloud aspirations and industry trends toward convergence have significantly changed the game.

Wikibon is advising clients generally and NetApp ONTAP users specifically to carefully assess options with regard to migrations. In particular, moves from ONTAP 7-Mode to Clustered ONTAP have hidden costs which buyers should carefully evaluate prior to making purchase decisions.

Key Findings

Wikibon key findings include:

- The breakeven for most migration projects from ONTAP 7-Mode to Clustered ONTAP is poor at over 50 months – the Internal Rate of Return (IRR) is anemic at 12% or lower;

- Such a low return is below the typical hurdle rate for technology investments which historically have been risky and require higher returns for CFOs to justify;

- NetApp customers with 7-Mode running well should consider passing on converting to Clustered ONTAP, and focus on conversions to true private clouds and public clouds;

- Buyers should also consider workloads in their decision process;

- Specifically, we believe customers of latency-sensitive workloads should gain clarity on how NetApp plans to integrate SolidFire into its portfolio before migrating to Clustered ONTAP;

- For bandwidth constrained workloads, customers should consider higher performance NAS appliances for Oracle. Examples include Oracle’s ZFS appliance, EMC’s Isilon or a variety of products from startups (e.g. Avere);

- For capacity-oriented workloads, customers should review all options to lower risk and improve ROI;

- Pure play storage vendors (and other component vendors) should be considered as tactical, not strategic partners by enterprise IT, unless the provider can demonstrate the ability to dramatically improve business results.

Note: A migration from NetApp 7-Mode to NetApp cDOT is almost always going to be cheaper and faster than a migration to an alternative platform like ZFS, XtremIO or any other platform (e.g. NetApp E-Series or SolidFire). Migrations from any storage platform will almost always incur significant costs, and vendors will almost always attempt to obfuscate such costs. While this analysis is NetApp specific, clients should always carefully consider migration costs in a broader context to assess the business impact.

Strategic Imperatives for Enterprise IT

In our recent research we laid out “Ten Strategic Steps for meeting the Data-driven Imperative”. (See Note1 in Footnotes for the list of ten). The first three are relevant to storage infrastructure. They are:

- Keep core systems of record that are working well on current platform(s);

  - The current systems of record should not be converted to other platforms, as this is both costly and risky. They should be functionally improved to include inline analytics, to allow greater automation of decision-making.

- Improve infrastructure costs and agility for existing systems of record;

  - Migrate systems of record (not all systems) to all-flash storage infrastructure to improve IO times and reduce IO processing time, in preparation for additional IO load from predictive inline analytical processing;

  - Enable data sharing via space-efficient snapshots using the flash storage to drastically reduce data movement and IT cycle times from days/weeks to minutes;

  - Migrate to True Private Cloud(s) (see Wikibon research for definition of “True” Private Cloud) to ensure that the system of record equipment and support costs are competitive with public cloud offerings – the true private cloud vendor is responsible for all maintenance;

  - Move away from administration silos for server, storage and network, and move towards a DevOps Model.

- Improve Productivity of System of Record Application Developers;

  - Enable much faster data access by publishing complete copies of production databases and code bases from shared data on flash to improve programmer productivity, shorten development cycles and improve code quality (see Step 2 of Wikibon research entitled “Driving Business Value for Oracle Environments with an All-Flash Strategy”);

  - Update development tools and platforms on-premise or in the cloud to shorten development and maintenance cycles.

The specific plan to implement these strategic imperatives will depend on the starting-point, including what storage is currently installed.

Tactical Questions for NetApp Customers

NetApp has positioned Clustered ONTAP as having four main advantages:

- Scalability from 2-node storage controllers to a distributed cluster of multiple 2-node controllers;

- Improved availability by lowering scheduled down-time, e.g., software/firmware upgrades, break-fix and hardware replacements;

- Improved performance by allowing system administrators to migrate workloads more easily to nodes which have the performance characteristics or space to improve performance;

- Future functionality – NetApp has indicated that Clustered ONTAP will get the majority of future functionality, despite the fact that only 17% of the base has migrated to the platform.

Wikibon has stated very clearly, as summarized in the previous section, that migration to a true private cloud is a strategic imperative for enterprise IT, and should be achieved as fast as possible. In the meantime, NetApp customers face practical questions about their current storage infrastructure. Questions include:

- Should I convert from 7-mode to Clustered ONTAP?

- What are the risks of staying in 7-mode?

- Where will NetApp’s SolidFire all-flash array fit?

- Should I purchase higher bandwidth filers to facilitate data movement?

- How far can I consolidate filers?

- Can I save money by moving filer data to an external service?

- Which “True Private Cloud(s)” are best suited for different workloads and where can NetApp be part of private clouds?

These questions are best answered by looking at specific workload types.

Latency Sensitive Workloads

Wikibon defines latency storage as having a consistent IO latency of one millisecond and lower. Application environments that benefit from latency storage are more complex databases, systems where response-time is critical (e.g., inline analytic systems), and machine-to-machine systems. The latency sensitive workloads can be transactional or batch.

Wikibon has shown that all-flash storage has many other benefits for IT infrastructure. By using snapshots, logical copies of data can be shared in seconds/minutes. This is critical to allowing other systems and users access to data immediately, rather than having to copy data from one hard disk drive (HDD) to another. It is also a significant cost saver, as the flash storage is shared, and system time is not utilized moving the data. Only small amounts of metadata are shared.

Wikibon’s analysis concludes that Clustered ONTAP is not a good fit for latency sensitive workloads. It is easy to move workloads around, but the FAS metadata architecture does not have (for example) a 40Gb RDMA InfiniBand high-speed node interconnect that enables a true clustered scale-out architecture. The NetApp acquisition of SolidFire is clearly much better from a scale-out perspective than the NetApp FlashRay project it replaced, but is in the “scale-out light” category, with only 1GbE connections between the nodes. This architecture significantly restricts shared metadata across the storage nodes.

Bottom line: The “Migration Cost of Storage Arrays” section below shows that the cost of migrating is significant, with a breakeven of over 50 months. NetApp customers should migrate latency sensitive workloads to Clustered ONTAP only as a last resort.

Most true private cloud platforms are not yet ready for robust low-latency workloads, the “top 10%” of workloads. Given that a disruption is required as an interim, the choices are:

- For Oracle database latency sensitive environments, an Oracle “engineered system” can be configured as a true private cloud, and is likely to be the lowest-cost option by a significant margin, especially as Oracle offers significant tools to reduce the most expensive component of the system, the Oracle software;

- For very mixed latency sensitive environments, the best strategic option is likely to be an interim all-flash scale-out solution such as Kaminario’s K2, Dell/EMC’s XtremIO, Oracle’s FS (scale-out expected 2016), or NetApp’s SolidFire;

- As true private cloud systems ramp up in functionality in 2016 and beyond, Wikibon expects that the best strategic option will be a direct migration to a private cloud.

Standard SAN Workloads

Standard SAN block-based workloads are the design point for Clustered ONTAP. These workloads in general are working well on 7-Mode, and would work with lower head-count and greater storage utilization if migrated to Clustered ONTAP.

Bottom line: The “Migration Cost of Storage Arrays” section below shows that the cost of migrating is significant, with a breakeven of over 50 months and an IRR of 12%, even assuming a high level of benefits of doubling the productivity of administrators and improving the utilization of storage. Wikibon’s conclusion is that NetApp 7-Mode users should not undertake the migration to ONTAP systems for Standard SAN workloads, but should focus on migrating these workloads to true private clouds.

High Bandwidth Filers

Some workloads such as backup, extract, transform & load (ETL) and batch are often constrained by bandwidth and the ability to perform in write-intensive environments. In some detailed modeling and costing in previous Wikibon research (See Note2 in Footnotes for reference), the cost of traditional 2-node storage arrays was shown to more than double when the write rate went from 20% to 50%. The research states “Wikibon has consistently pointed out that for most workloads, write performance is the critical factor determining application performance. This is especially true for database systems of record where performance directly drives business value.” In Oracle environments, RMAN-led backups place stresses on storage systems with high aggregate data volumes and high write rates of 50% or higher.

NetApp’s WAFL architecture is not well suited to high-write and high-bandwidth workloads. Alternative products such as an Oracle ZFS appliance deliver superior solutions with significantly lower costs. At the highest bandwidth requirements, where organizations need to write tens of terabytes per hour, the cost difference between traditional storage arrays and specialist systems such as Oracle ZFS or NetApp E-Series grows to a factor of six.

Bottom line: Bandwidth and high-write problems are unlikely to be solved by migrating to Clustered ONTAP. If the requirement for high bandwidth is acute enough (e.g., to meet more aggressive recovery point objective (RPO) and recovery time objective (RTO) targets, or because lines of business need reports out in half the time), an alternative solution is required. Wikibon’s research shows that the ZFS appliance is in a league of its own for high-bandwidth write-heavy Oracle backup, ETL and batch environments.

Standard Filers

There are better management options with Clustered ONTAP for standard filers. Essentially, CDOT allows workloads to be migrated easily across the network. NetApp has good reports that provide visibility to storage administrators as to what should be shifted, improving the productivity of the storage administrators and improving the utilization of the storage array.

However, the migration is still a significant disruption. Because of the disruption, alternatives such as Dell’s scale-out solutions, Isilon (from EMC) and Oracle’s ZFS will probably offer much lower cost solutions as an interim platform. This area will also be increasingly covered by both public cloud and true private cloud offerings, which will offer much more compelling business cases. However, such so-called converged systems require a greater commitment and potential organizational disruptions.

Capacity Storage & WORN

WORN (Write Once Read Never, or almost Never) storage requires very low cost storage. In general, the only way to make this storage useful for future analysis is to ensure that there is good descriptive metadata available to minimize search times and reduce retrieval costs.

Traditional storage arrays are not competitive in this area, and adding a migration project to NetApp Clustered ONTAP is overkill for this workload type. The Clustered ONTAP architecture and metadata system are designed for active data. NetApp (or indeed any traditional array) does not compete against very low cost basic solutions for archiving available with public cloud services from Amazon, Microsoft Azure, Oracle and others. Practitioners should note that the cost of extracting the data back out again from clouds will be high, and is unlikely to be justified other than on legal grounds.

The key to enabling flexible and agile access to WORN data is robust metadata which can be extended to support archiving retrieval software. Ideally this metadata should be held on flash storage. New tape-based technologies such as Flape with LTO7 tape libraries and flash metadata should meet the low cost requirements (tape vendors include HPE, IBM, Oracle and Quantum), with the ability for the data to be easily retrieved by enterprise IT or the line of business for additional processing. Other contenders are distributed object stores such as IBM’s Cleversafe acquisition and HPE’s partnership with Scality, which can provide very low cost ways of meeting compliance requirements.

Migration Cost of Storage Arrays

In April 2014, Wikibon posted research on the cost of migrating storage arrays, entitled “The Cost of Storage Array Migration in 2014”. The research estimates the total cost of migration of a traditional $300,000 storage array to be about $163,000, or 54% of the original cost of the array. It concluded: “Senior IT executives should ensure the cost of migration is built-in to the business case for new storage arrays or consider architectures like Server SAN which can significantly reduce the elapsed time and cost of provisioning and retiring storage”.
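The ratio quoted above can be checked with a few lines of arithmetic. The sketch below uses only the two dollar figures from the 2014 research; the function name `migration_cost_ratio` is made up for illustration and is not part of any Wikibon tool.

```python
def migration_cost_ratio(array_cost: float, migration_cost: float) -> float:
    """Return the migration cost as a fraction of the array's purchase cost."""
    if array_cost <= 0:
        raise ValueError("array_cost must be positive")
    return migration_cost / array_cost


# Figures quoted from the 2014 Wikibon research cited in the text.
ARRAY_COST = 300_000.0
MIGRATION_COST = 163_000.0

ratio = migration_cost_ratio(ARRAY_COST, MIGRATION_COST)
# Cost a business case should carry if migration is built in, as the research advises.
total_with_migration = ARRAY_COST + MIGRATION_COST

print(f"Migration cost as a share of array cost: {ratio:.0%}")
print(f"Array plus migration: ${total_with_migration:,.0f}")
```

Building the migration line into the business case in this way is what the quoted conclusion recommends.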

There are five major concerns with converting from NetApp 7-Mode to Clustered ONTAP:

- Automation, orchestration and self-service are not a strong part of Clustered ONTAP, which is still designed for storage administrators. It needs significant investments in learning the concepts. If you read the “NetApp System Administration Guide for SVM Administrators for Clustered Data ONTAP® 8.2”, there are over 300 pages of concepts and practices that must be learned and practiced by systems administrators. The 8.3 version has been reduced to 73 pages, but just moves the detail to other publications.

- The locus of control of NetApp’s automation products is the storage network. To compete with public clouds, the locus of control has to be system and application orientated. The products such as OnCommand Workflow Automation and OnCommand Performance Manager are again focused on the storage network. It is very heavy lifting to include them in a system or application focused infrastructure, and IT is saddled with maintaining the environment.

- The conversion from 7-Mode to Clustered ONTAP is a significant project. Significant re-education of systems administrators is required, the processes and procedures need radical upgrades, and the migration is disruptive and needs to be planned carefully. The elapsed time for the migration varies from 10 to 20 months.

- Migrating from one type of array to another, e.g., from 7-mode to Clustered ONTAP, is a significant undertaking, involving updating the skills of the system and storage administrators, planning the migration and rewriting the storage management processes and procedures.

- The strategic and financial benefits of conversion to a true private cloud or public cloud (or the selection of best-of-breed alternatives for tactical problem solving) far outweigh the benefits of conversion from 7-Mode to Clustered ONTAP.

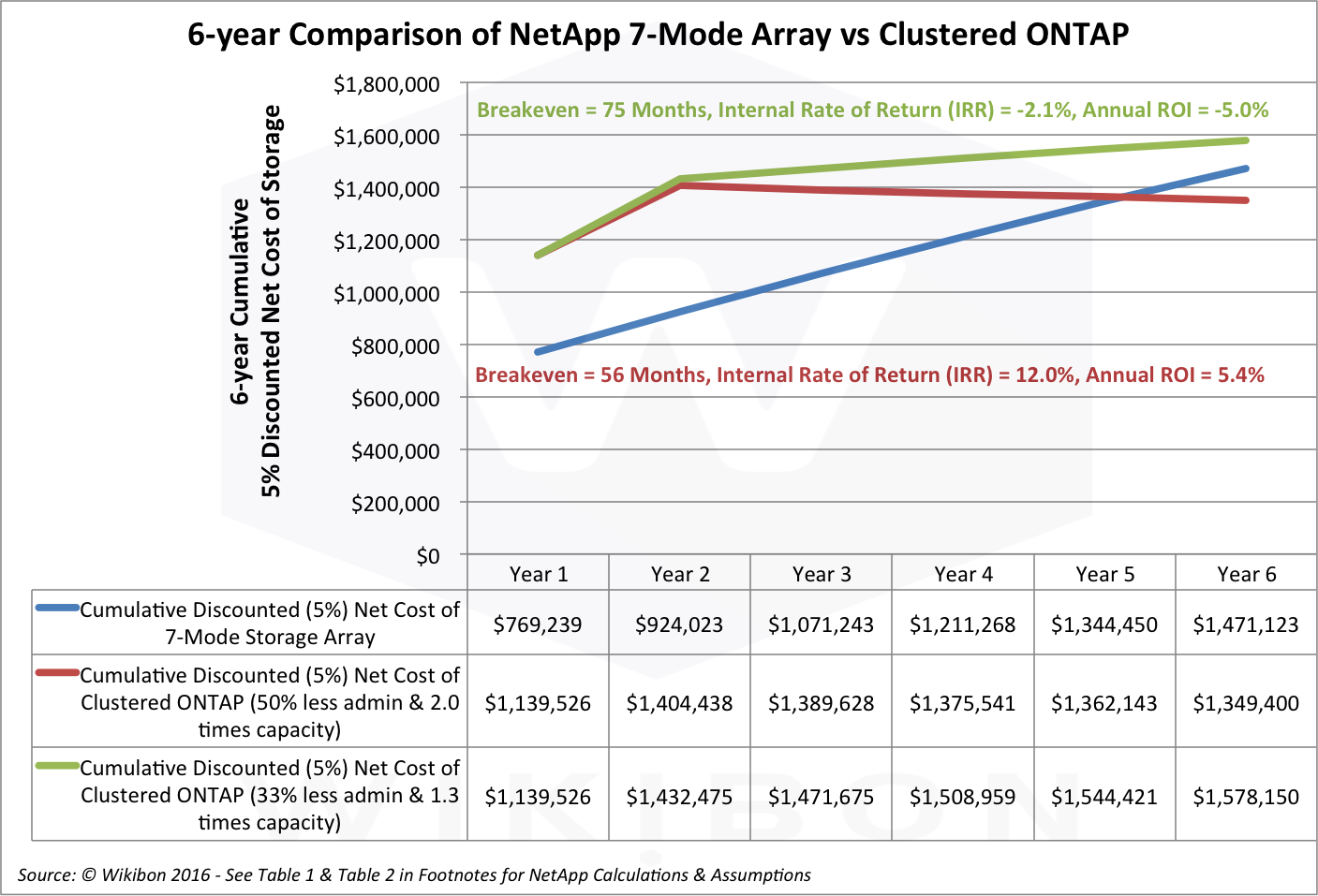

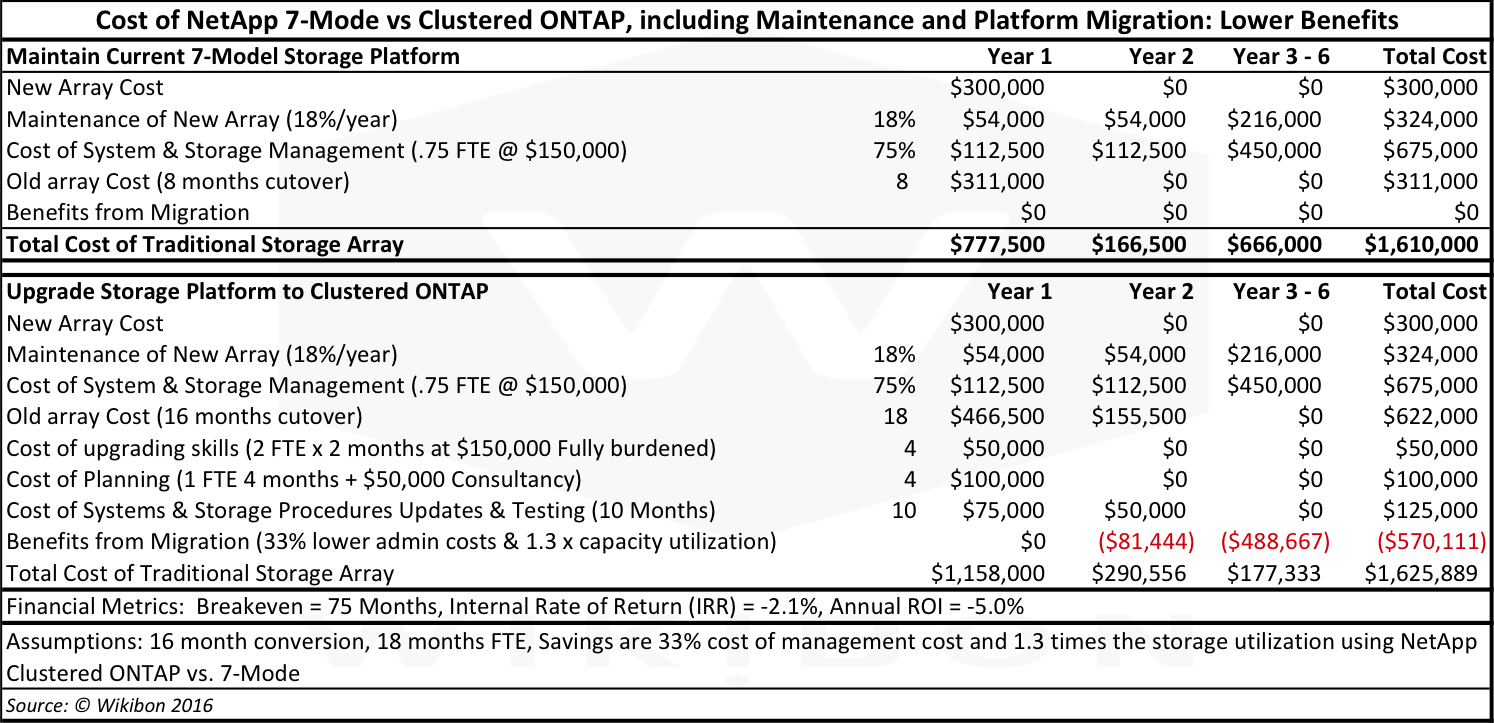

Figure 1 shows the discounted cash flows and financial metrics of such a migration. The detailed assumptions are given in Table 1 and Table 2 in the Footnotes. The key assumptions include:

- The total effort to migrate from 7-Mode to Clustered ONTAP on a new array is 18 full-time-equivalent (FTE) months;

- The elapsed time required to migrate is 16 months;

- The high benefit of migration is 50% less manpower to manage and twice the storage utilization;

- The lower benefit of migration is 33% less manpower and 1.3 times the storage utilization.

Source: © Wikibon 2016

See Table 1 and Table 2 in the Footnotes below for detailed assumptions and calculations
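To make the high and lower benefit assumptions listed above concrete, the following sketch turns them into an annual saving. The staffing reductions and utilization multipliers come from the text. The base administration and storage costs are hypothetical placeholders, not Wikibon's figures, and the sketch assumes that a higher utilization translates directly into proportionally less capacity being needed, which is a simplification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    name: str
    staffing_reduction: float      # fraction of administration effort saved
    utilization_multiplier: float  # storage utilization after / before migration


def capacity_saving_fraction(utilization_multiplier: float) -> float:
    """Fraction of capacity no longer needed for the same data (simplifying assumption)."""
    if utilization_multiplier <= 0:
        raise ValueError("utilization_multiplier must be positive")
    return 1.0 - 1.0 / utilization_multiplier


def annual_saving(s: Scenario, admin_cost: float, storage_cost: float) -> float:
    """Annual saving from reduced staffing plus reduced capacity."""
    staffing = admin_cost * s.staffing_reduction
    capacity = storage_cost * capacity_saving_fraction(s.utilization_multiplier)
    return staffing + capacity


# Benefit levels taken from the key assumptions in the text.
HIGH = Scenario("high benefit", staffing_reduction=0.50, utilization_multiplier=2.0)
LOW = Scenario("lower benefit", staffing_reduction=0.33, utilization_multiplier=1.3)

# Hypothetical annual base costs, chosen only to make the arithmetic visible.
ADMIN_COST = 100_000.0
STORAGE_COST = 100_000.0

for scenario in (HIGH, LOW):
    saving = annual_saving(scenario, ADMIN_COST, STORAGE_COST)
    print(f"{scenario.name}: ${saving:,.0f} per year")
```

Under this simplification, doubling utilization halves the capacity needed, while a 1.3 times improvement saves roughly 23% of capacity.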

The conclusion of the analysis in Figure 1 is that, even with high benefit targets, the breakeven is 56 months and the IRR is 12%. Given the amount of uncertainty in IT technology, a project with this return should never be approved. With lower benefits, the breakeven is 75 months and the IRR is -2.1%.
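For readers who want to run the same kind of test on their own numbers, here is a minimal sketch of how a breakeven month and an IRR can be derived from a monthly cash flow series. The cash flows are hypothetical and do not reproduce the Wikibon model behind Figure 1, whose inputs are in Table 1 and Table 2. The IRR is found by simple bisection, which assumes the common pattern of outlays first and savings afterwards.

```python
from typing import Optional, Sequence


def npv(rate: float, cash_flows: Sequence[float]) -> float:
    """Net present value at a per-period discount rate; period 0 is undiscounted."""
    return sum(cf / (1.0 + rate) ** t for t, cf in enumerate(cash_flows))


def breakeven_period(cash_flows: Sequence[float]) -> Optional[int]:
    """Index of the first period where cumulative cash flow is non-negative."""
    total = 0.0
    for t, cf in enumerate(cash_flows):
        total += cf
        if total >= 0:
            return t
    return None  # never breaks even within the horizon


def irr(cash_flows: Sequence[float], lo: float = -0.5, hi: float = 1.0,
        tol: float = 1e-12) -> float:
    """Per-period IRR by bisection; requires NPV to change sign between lo and hi."""
    f_lo, f_hi = npv(lo, cash_flows), npv(hi, cash_flows)
    if f_lo * f_hi > 0:
        raise ValueError("NPV does not change sign on [lo, hi]")
    while hi - lo > tol:
        mid = (lo + hi) / 2.0
        f_mid = npv(mid, cash_flows)
        if f_lo * f_mid <= 0:
            hi = mid
        else:
            lo, f_lo = mid, f_mid
    return (lo + hi) / 2.0


# Hypothetical project: 12 months of migration outlay, then 48 months of savings.
flows = [-10_000.0] * 12 + [5_000.0] * 48

monthly_irr = irr(flows)
annual_irr = (1.0 + monthly_irr) ** 12 - 1.0  # compound monthly rate to annual

print(f"Breakeven at month index: {breakeven_period(flows)}")
print(f"Annualized IRR: {annual_irr:.1%}")
```

The annualized IRR is the figure to compare against the organization's own hurdle rate for technology investments.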

This analysis is one explanation of why a low percentage (~17%) of the NetApp installed base has migrated to Clustered ONTAP.

Whither NetApp?

NetApp has great products, and a loyal customer base. However, its traditional business is being completely disrupted by Server SAN, True Private Cloud & Public Cloud economics. Markets don’t change quickly; many NetApp customers will defer the necessity of changing systems from best of breed components architected and managed by themselves to True Private Cloud Systems, where the maintenance, updates and even operations are managed by vendors.

The key question for NetApp is how to compete and contribute in a systems and applications led infrastructure, where processes are completed much closer to the server. The strategic options include:

- Manage declining revenues as a traditional cash cow – there is plenty of profit and customer satisfaction from balancing limited investment, increased efficiency and faster business cycles to compensate for lower gross margins;

- As the last man standing in the traditional storage market, focus on gaining market share from EMC, HDS, HP and other storage vendors/vendor divisions to maintain revenues and gross margins;

- Become a systems vendor, acquire or merge with a systems platform vendor and integrate the NetApp Cloud software;

- Become an OEM supplier of NetApp Cloud software offerings, moving beyond FlexPod to being a high-function NetApp Cloud software provider – this includes fitting in with the APIs and refresh cycles of the lead vendors providing true private cloud imperatives of “one throat to choke” and offering complete system/application maintenance and update services;

- Be acquired, like EMC.

Wikibon would observe that the list of organizations successfully disrupting themselves is short; Netflix and Charles Schwab stand out as examples. Both achieved it by inserting an impervious wall between the traditional and disruptive business operations. NetApp could try combining the first and fourth options above with such an approach.

Finally, a word of caution to loyal NetApp customers. Relationships matter – if you trust your vendor and/or the vendor partner, and have a good sourcing structure, process and high trust…you’d better make sure that if you move to an alternative…that the alternative vendor can deliver the same levels of service.

Action Item:

CIOs and senior enterprise IT executives should move away from strategic partnerships with vendors providing individual technology components. They should move towards strategic partnerships with true private cloud and public cloud providers, and push responsibility for design, testing and full maintenance to these cloud service providers.

Vendors providing individual technologies should work as an OEM to fit in with the testing and maintenance practices of the cloud providers. The cloud service providers (true private clouds and public clouds of all types) will need to create and foster strong ecosystems, where innovative technologies can be integrated in.

Footnotes:

Note1: The ten steps laid out in “Ten Strategic Steps for meeting the Data-driven Imperative” are:

- Keep core systems of record on current platform(s);

- Improve infrastructure of existing systems of record;

- Improve Productivity of System of Record Application Developers;

- Place Analysts/Data Scientists Close to the Line of Business;

- Understand Potential Data Sources inside and outside Organization;

- Position Location of Data Sources to Minimize Cost & Elapsed-time to move Data;

- Provide Best-of-breed Analytic Tools to Analysts/Data Scientists;

- Grow/Evolve the Capabilities of Analysts/Data Scientists;

- Implement Standards (e.g., PMML) to Facilitate Direct Transfer from Model Creation to Model Deployment;

- Gain success from one business process in one line of business and rapidly expand to additional business processes and additional lines of business.

Note2: The research is entitled “Oracle ZFS Hybrid Storage Appliance Reads for Show but Writes for Dough”. Wikibon’s TCO analysis of a traditional 2-node storage array compared to a high-performance NFS device (Oracle ZFS is the reference technology) indicates that the traditional 2-node storage array has a 266% higher TCO for an environment with 400 terabytes, 4M IOPS and 50% writes over a 4-year horizon. The Oracle ZFS Appliance does not use the open-source version of ZFS.

Table 1 & Table 2 below are detailed tables with the Assumptions & Methodology supporting Figure 1 above.

Source: © Wikibon 2016
