Application performance management (APM) has been around since the days of the mainframe. As systems architectures became more complex, the technology evolved to accommodate client-server, Web tier architectures, mobile and now cloud-based systems. A spate of new vendors emerged to solve the sticky problems associated with ensuring consistent and predictable user experiences. The market is approaching $5B globally and growing at a 10% CAGR, with a variety of established companies and new entrants attacking the space.

In this week’s Wikibon CUBE Insights Powered by ETR, we welcome back and thank Erik Bradley for his collaboration and contribution to this research. Erik is the Chief Engagement Strategist at Aptiviti, the holding company of our data partner ETR.

Input from Practitioners

ETR recently hosted a VENN session on this topic. VENN is a roundtable open exclusively to ETR’s clients, and we sometimes summarize these sessions in our Breaking Analysis segments.

By way of background, the APM market dates back to the mainframe era. In monolithic systems, traces and logs were adequate to identify and remediate application performance issues. But as systems became increasingly distributed, identifying the root cause of problems became more challenging. Cloud, containers, microservices, real-time data flows, serverless and mobile apps complicate the challenges by an order of magnitude.

Additionally, data in today’s distributed architectures is fragmented. User data is separate from application data, which in turn is separate from infrastructure data, and organizations often don’t have a full picture of the information they need to ensure consistent and predictable performance. The result is slow remediation and poor user experiences, both of which are problematic in a digital world.

To this point, digital transformation is driving the need for a more holistic view (a systems view) of relevant data. Customers from the ETR panel stressed the need for a single pane of glass and their willingness to pay for value. For these reasons, the panel believes that the APM market will continue to see steady growth as a foundation for digital business.

Here is Erik Bradley’s take on the APM market.

Positioning Some of the Leaders in APM

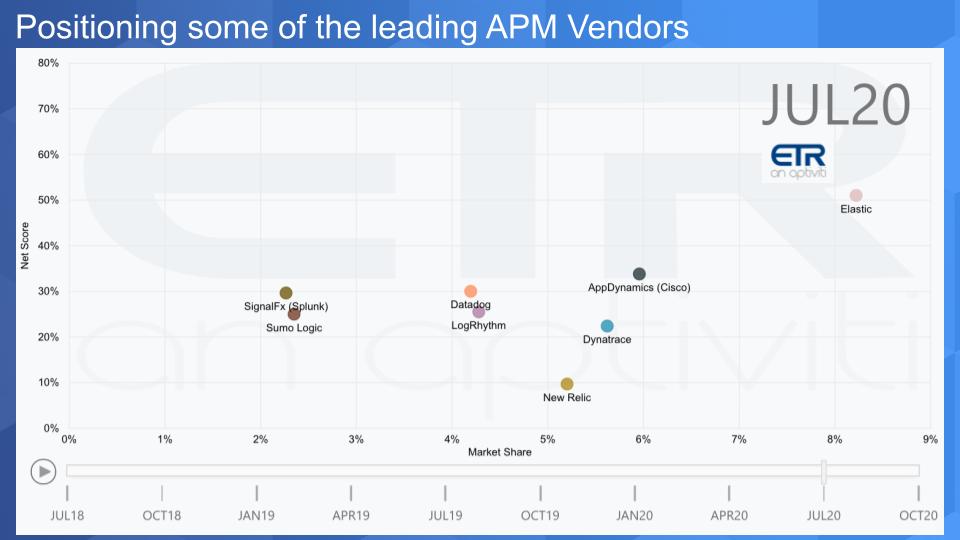

The chart shows some of the leading APM vendors in the space. The vertical axis shows Net Score, which is ETR’s measure of spending velocity. The horizontal axis is Market Share and is a measure of the pervasiveness of the vendor within the survey, based on mentions (not spend levels). Note that this data is from the July survey; the October survey is currently in the field.

The following key points are noteworthy:

- On balance, relative to other sectors, the Net Scores of the APM vendors are tepid.

- The one exception is Elastic. The expert practitioners on the panel see Elastic as an open source alternative at half the price of traditional logging solutions. Elastic is the company behind the “ELK Stack” (Elasticsearch, Logstash and Kibana).

- The AppDynamics acquisition by Cisco appears to be paying dividends as the company consistently cites this as a growth business on its earnings calls.

- Dynatrace appears to be leading, especially in large organizations. Although Dynatrace is clearly a leader, the panelists were mixed on the company. On the one hand, they felt it had a lead position in machine learning and proactive monitoring, as well as a great roadmap. On the other hand, one panelist felt Dynatrace was resting on its laurels from a product standpoint.

- Datadog is seen as a viable and less expensive logging platform relative to Splunk. Its valuation is lofty and we’ll discuss that below.

- Splunk’s acquisition of SignalFx fills a hole in monitoring for the company. It also gives Splunk a stronger subscription play as the company transitions to an annual recurring revenue (ARR) model. The panel, however, was critical of Splunk’s pricing and cost, and felt the company would be under pressure from cheaper alternatives, including open source tools.

- Sumo Logic just recently completed its IPO and is another competitor to Splunk.

- New Relic’s Net Score is disappointing and reflects some of the challenges the company is facing. New Relic was perhaps the most discussed vendor on the panel. The company is seen as a leader with excellent technology. However, Elliott Management has taken a position in the company and will likely become active in trying to restructure the firm in an attempt to unlock value.

Note: Broadcom is also prominent in this space through its acquisition of CA. However, it did not come up in the panel and ETR does not have sufficient data in the model to compare it with the vendors listed above.

We also discussed the cloud vendors, which offer their own monitoring tools within their respective clouds. Generally the panelists believe, and we agree, that while these tools are useful, they are not a massive competitive threat to the APM pure plays. We feel this way because the APM vendors support multiple clouds as well as on-prem and most customers want optionality and visibility across clouds. Unless a customer is only using a single cloud (which is not the norm), we believe that cloud-based monitoring tools will be more complementary than competitive.

We also touched upon two new entrants to the space, Honeycomb and Observe, as companies to watch.

Honeycomb is a live debugger for systems in production. It picks up where monitoring leaves off. Its co-founder and CTO, Charity Majors, coined the term observability as it relates to APM.

Observe is a company headed by Jeremy Burton and backed by elite Silicon Valley investors. The company uses Snowflake in the backend of its SaaS product to create end-to-end visibility and reduce the time to remediation.

Here’s a cliplist of Erik Bradley’s commentary on this data set.

Valuations of Selected APM Players

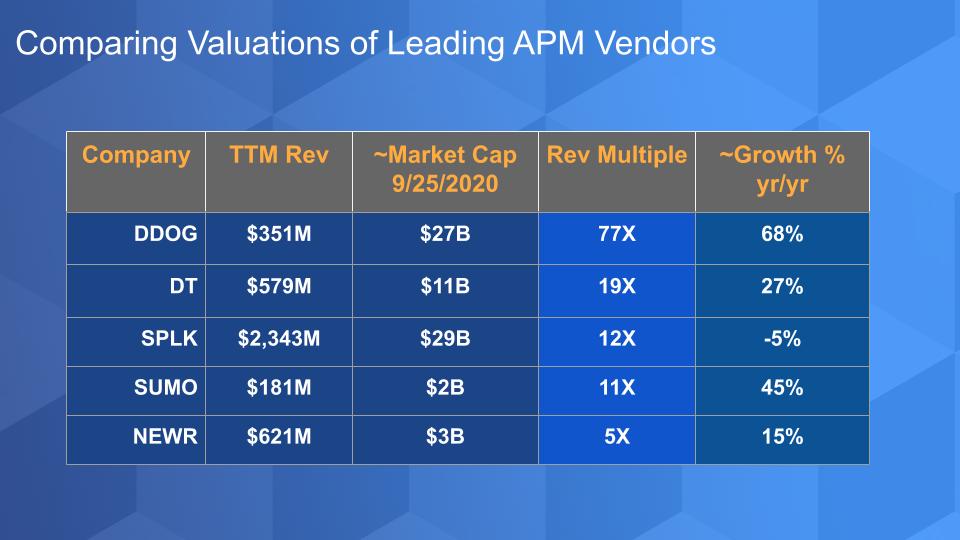

The chart maps the trailing twelve-month (TTM) revenue, market capitalization as of 9/25/2020, the revenue multiple based on TTM revenue and the year-on-year growth as reported by the companies for the most recent quarter.

Datadog’s valuation jumps out on this chart because it sits close to that of Splunk, a main competitor with 6X its revenue. It’s also comparable to Snowflake’s pre-IPO value with a growth rate that is 5,300 basis points lower. At this elevated valuation, we would like to see stronger spending momentum in the ETR survey. Let’s wait and see what the October data set shows before we make too much of the somewhat lower Net Score levels.
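The arithmetic behind these comparisons is simple and can be sketched in a few lines. The snippet below is only an illustration: the function names are ours, and the vendor names and dollar figures are hypothetical placeholders, not the values from the chart. It shows how a revenue multiple is derived from market capitalization and TTM revenue, and how a gap quoted in basis points converts to percentage points.

```python
def revenue_multiple(market_cap: float, ttm_revenue: float) -> float:
    """Return market capitalization divided by trailing twelve-month revenue."""
    if ttm_revenue <= 0:
        raise ValueError("TTM revenue must be positive")
    return market_cap / ttm_revenue


def basis_points_to_percentage_points(bps: float) -> float:
    """Convert basis points to percentage points (100 bps = 1 point)."""
    return bps / 100.0


# Hypothetical placeholder figures in $M (market cap, TTM revenue).
# These are NOT the numbers from the chart.
placeholder_vendors = {
    "VendorA": (30_000.0, 500.0),
    "VendorB": (32_000.0, 3_000.0),
}

for name, (cap, revenue) in placeholder_vendors.items():
    print(f"{name}: {revenue_multiple(cap, revenue):.1f}x TTM revenue")

# The growth-rate gap cited in the text, expressed in percentage points.
print(basis_points_to_percentage_points(5300))
```

Two companies with similar market caps but a 6X difference in revenue will show a roughly 6X difference in multiple, which is the kind of gap the paragraph above is pointing at.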

The valuation gap between Dynatrace and Datadog is notable. Even though they are not direct competitors, there are overlaps and it’s instructive to see the premium the Street pays for growth. This means Datadog is priced to very tight tolerances and must continue to beat expectations and grow into its value.

Splunk had rallied nicely off its March lows but is off nearly 20% from its September 1st high, down more sharply than the major averages. The panelists were critical of Splunk’s pricing and competitive outlook but we believe Splunk has some advantages, despite its recent revenue decline. Splunk has a loyal customer base with teams of people trained with Splunk expertise. The company is transitioning to an ARR model which is pressuring its income statement. We’re not overly concerned about this transition as we’ve seen other companies (e.g. Adobe and Tableau) successfully navigate through similar changes. The SignalFx acquisition accelerates Splunk’s subscription model and the company is increasingly moving into analytics spaces where it contributes to data pipelines within organizations. While competition is eyeing Splunk, we’ve heard about the next “Splunk Killer” for years.

New Relic is of somewhat greater concern. It has solid revenue and a decent growth rate, and it beat expectations last quarter, but it appears to be in turmoil. The company guided with uncertainty due to a variety of reconciliation and other factors, including a new pricing model and a free tier that it expects to be popular. This is reflected in its valuation, which trails its peers quite notably. Moreover, its Net Score (spending momentum) in the ETR surveys has been decelerating since COVID hit. We’ll see what happens in the October survey, but we expect Elliott Management to play a more active role.

On the plus side, we believe, and the VENN panel confirmed this, that New Relic has solid technology. Its NRDB is purpose-built for APM and is well-regarded in the industry. In addition, a number of the panelists indicated that New Relic has a solid roadmap. Active investor involvement, while painful for management, can often result in the creation of upside value.

Erik Bradley comments on the valuations of the APM vendors, including the merger and acquisition outlook.

VENN Panel Verbatim Comments

ETR’s VENN panel tapped the expertise of three practitioners:

- The CTO of a global travel and hospitality enterprise

- A solutions architect for a global financial services firm

- The Chief Architect at a large health care services organization

Here are some of their comments.

I expect steady growth in this market for the next ten years.

The industry is ripe for consolidation.

Regarding Dynatrace…their usage of machine learning to enable that proactive maintenance of your platform is good.

Splunk is going to be under enormous pressure to justify the cost for its logging capabilities. Elastic is a very viable alternative and it’s half the price.

Grafana, and ELK, and open source Kafka are streaming logs and eventing in real time, so why do I need to spend multimillions on software packages?

We used to be a huge Datadog customer, but the large bills made us start using more Elastic, Kibana, and other open source technologies.

I wouldn’t be surprised if there was some consolidation by the big cloud players. Some of these players will be taken off the market in the next 18-24 months.

Splunk didn’t have a really good APM tool, but did a lot of logging and monitoring, and that’s why they acquired SignalFx so now they have an end-to-end solution. But SignalFx doesn’t really have any frontend monitoring capabilities so they really need to make some investment there.

New Relic and Datadog also have logging capabilities to compete with Splunk. And Dynatrace does a little bit of both. Now you have like all these people trying to claim that they have end-to-end solutions to track user experience, monitor and manage your applications, and give you visibility into your infrastructure and provide logging. I think what distinguishes each one of these players is their ability to be deployed within your specific environment.

New Relic is trying to target the small, medium, and startup companies. We have recently gone through a New Relic purchase, and those guys have been pretty aggressive on the discounts for enterprise customers. The free model is really there to create a moat around getting small to medium-sized companies onboarded and then having them grow into a larger license based on value propositions that the company creates.

I will say that New Relic’s technology is first rate. They do an amazing job monitoring and managing user experience as well as monitoring code. I would say the growth for a lot of these companies is really in the small and medium-sized business, and that’s where New Relic’s pricing model is headed.

New Relic also has a very good roadmap, but the challenge for them will be in enabling some of these machine learning capabilities across their entire stack. They’ve also just redone their user experience over the last year or so, and I think that they are in a good position.

New Relic and Elastic are getting closer to a single pane and Dynatrace is resting on their laurels from a product standpoint. That’s my opinion and perception based on using the tool. New Relic is advancing much further and I think Elastic will also eat their lunch as well.

APM – Transitioning to Support Digital Operations

For years, application monitoring has relied on alerts, logs, traces and even tribal knowledge. In the pre-distributed systems world, this was fine because a trace could tell you what was going on. But things got much more complicated architecturally with cloud and are changing fast with containers and serverless. So today, it’s much harder to understand the customer experience because it’s difficult to get a full picture of all the data. What we mean by that is user data, application data and infrastructure data are all fragmented. The grail solution takes all this disparate data, ingests it, transforms it and connects all the dots across clouds and on premises. It then shapes the data with machine intelligence to create an organic systems view and proactively tells you that a problem is coming and even how to fix it, or just fixes it for you.

And finally, the absolute nirvana is doing this in a way that lets non-technical people understand the true user experience. In theory, this will allow organizations to remediate problems in one-tenth the time at much lower cost.
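To make the idea of connecting fragmented data a little more concrete, here is a minimal sketch, not a description of how any vendor mentioned here actually works. It assumes, purely for illustration, that user, application and infrastructure records all carry a shared `request_id` field. The record shapes, field names and threshold are hypothetical.

```python
from collections import defaultdict


def correlate(user_events, app_traces, infra_metrics):
    """Group records from three separate sources by a shared request_id.

    Assumes every record is a dict with a 'request_id' key (an illustrative
    assumption; real systems need more elaborate correlation).
    """
    combined = defaultdict(lambda: {"user": [], "app": [], "infra": []})
    sources = (("user", user_events), ("app", app_traces), ("infra", infra_metrics))
    for source, records in sources:
        for record in records:
            combined[record["request_id"]][source].append(record)
    return dict(combined)


def slow_requests(combined, threshold_ms):
    """Return sorted request_ids with any app trace slower than threshold_ms."""
    return sorted(
        request_id
        for request_id, view in combined.items()
        if any(trace["duration_ms"] > threshold_ms for trace in view["app"])
    )


# Hypothetical sample records from three separate data silos.
user_events = [
    {"request_id": "r1", "page": "/checkout"},
    {"request_id": "r2", "page": "/search"},
]
app_traces = [
    {"request_id": "r1", "duration_ms": 1800},
    {"request_id": "r2", "duration_ms": 120},
]
infra_metrics = [
    {"request_id": "r1", "host": "node-a", "cpu_pct": 97},
    {"request_id": "r2", "host": "node-b", "cpu_pct": 35},
]

views = correlate(user_events, app_traces, infra_metrics)
for request_id in slow_requests(views, threshold_ms=1000):
    view = views[request_id]
    print(request_id, view["user"][0]["page"], view["infra"][0]["host"])
```

Once the three silos are joined, a slow checkout can be read alongside the page the user was on and the host that served it, which is the "single pane" view the panelists asked for. The machine intelligence layer described above would sit on top of a combined view like this one.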

Many thanks this week to Erik Bradley and the team at ETR. Remember these episodes are all available as podcasts, so please subscribe. We publish weekly on Wikibon and SiliconANGLE so check that out and please do comment on the LinkedIn posts we publish. Don’t forget to check out ETR for all the survey action. Get in touch on Twitter @dvellante or email david.vellante@siliconangle.com. And remember, Breaking Analysis posts, videos and podcasts are all available at the top link on the Wikibon.com home page.

Thanks everyone, be well and we will see you next time.

Watch the full video analysis: