Contributing Author: David Floyer

AWS is pointing the way to a revolution in system architecture. Much in the same way that AWS defined the cloud operating model last decade, we believe it is once again leading in future systems. The secret sauce underpinning these innovations is specialized designs that break the stranglehold of inefficient and bloated centralized processing architectures. We believe these moves position AWS to accommodate a diversity of workloads that span cloud, data center as well as the near and far edge.

In this Breaking Analysis we’ll dig into the moves that AWS has been making, explain how they got here, why we think this is transformational for the industry and what this means for customers, partners and AWS’ many competitors.

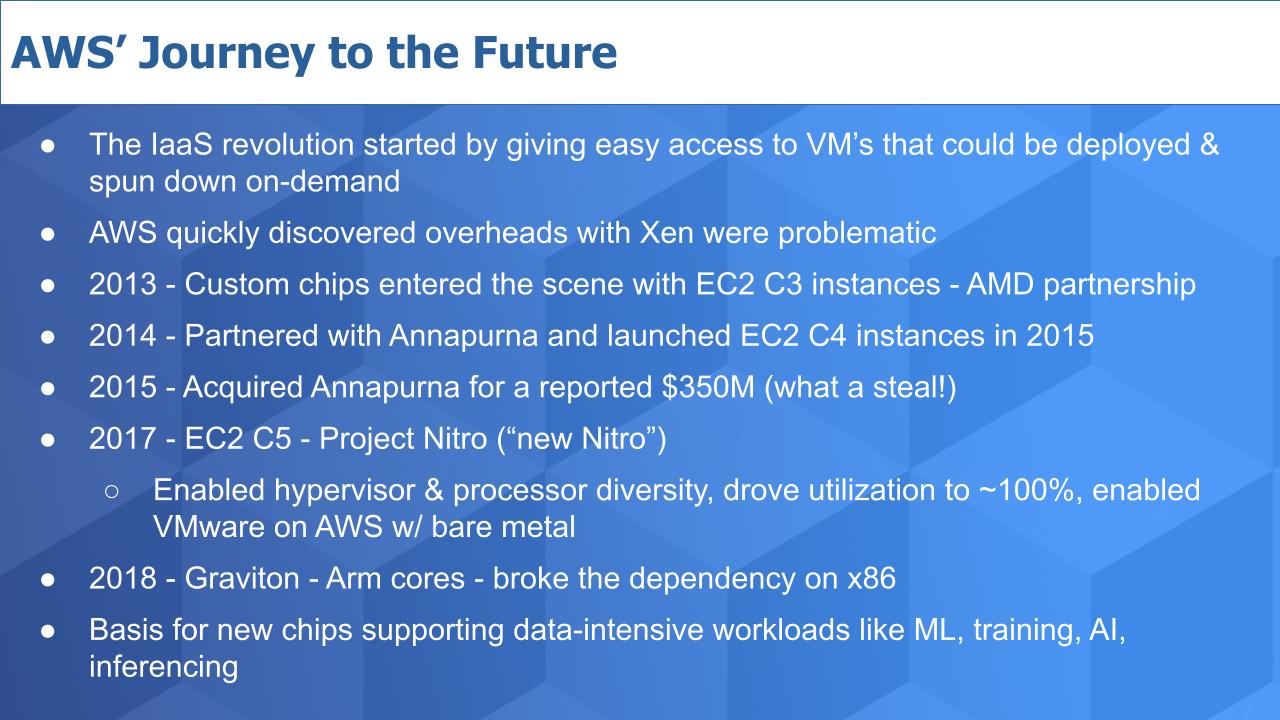

AWS’ Architectural Journey – The Path to Nitro & Graviton

The IaaS revolution started by AWS gave easy access to VMs that could be deployed and decommissioned on demand. Amazon used a highly customized version of Xen that allowed multiple VMs to run on one physical machine. The hypervisor functions were controlled by x86.

According to Werner Vogels, as much as 30% of the processing was wasted, meaning it was supporting hypervisor functions and managing other parts of the system, including the storage and networking. These overheads led AWS to develop custom ASICs that helped accelerate workloads.

In 2013, AWS began shipping custom chips and partnered with AMD to announce EC2 C3 instances. But as the AWS cloud scaled, Amazon wasn’t satisfied with the performance gains and they were seeing architectural limits down the road.

That prompted AWS to start a partnership with Annapurna Labs in 2014 and they launched EC2 C4 instances in 2015. The ASIC in C4 optimized offload functions for storage and networking but still relied on Intel Xeon as the control point.

AWS shelled out a reported $350M to acquire Annapurna in 2015 – a meager sum to acquire the secret sauce of its future system design. This acquisition led to a modern version of Project Nitro in 2017. [Nitro offload cards were first introduced in 2013]. At this time, AWS introduced C5 instances, replaced Xen with KVM and more tightly coupled the hypervisor with the ASIC. Last year, Vogels said that this milestone offloaded the remaining components, including the control plane and the rest of the I/O, and enabled nearly 100% of the processing to support customer workloads. It also enabled a bare metal version of compute that spawned the partnership with VMware to launch VMware Cloud on AWS.
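To make the overhead numbers concrete, here is a minimal sketch of the arithmetic. It assumes a hypothetical 64-core host (the core count is ours, not from AWS), takes the 30% figure as the worst case Vogels cited, and models "nearly 100%" as zero overhead for simplicity.

```python
def usable_capacity(total_cores: int, overhead_fraction: float) -> float:
    """Cores left for customer workloads after host overhead is subtracted."""
    if not 0.0 <= overhead_fraction < 1.0:
        raise ValueError("overhead_fraction must be in [0, 1)")
    return total_cores * (1.0 - overhead_fraction)


HOST_CORES = 64  # hypothetical host size, for illustration only

# Pre-Nitro: up to 30% of processing spent on hypervisor, storage and networking.
before = usable_capacity(HOST_CORES, 0.30)

# Post-Nitro: overhead moved to dedicated hardware (idealized here as 0%).
after = usable_capacity(HOST_CORES, 0.0)

# Relative gain in sellable capacity from the same physical host.
gain_pct = (after / before - 1.0) * 100.0

print(f"before: {before:.1f} cores, after: {after:.1f} cores, gain: {gain_pct:.1f}%")
```

Under these assumptions, reclaiming a 30% overhead yields roughly 43% more sellable capacity per host, not 30%, because the gain is measured against the smaller pre-offload base.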

Then in 2018, AWS took the next step and introduced Graviton, its custom-designed Arm-based chip. This broke the dependency on x86 and launched a new era of architecture, which now supports a wide variety of configurations to support data intensive workloads. These moves set the framework for other AWS innovations, including new chips optimized for ML training and AI inferencing.

The bottom line is AWS has architected an approach that offloaded the work currently done by the central processor. It has set the stage for the future allowing shared memory, memory disaggregation and independent resources that can be configured to support workloads from the cloud to the edge– at much lower cost than can be achieved with general purpose approaches.

Nitro is the key to this architecture. To summarize – AWS Nitro is a set of custom hardware and software that runs on Arm-based chips spawned from Annapurna. AWS has moved the hypervisor, network and storage virtualization to dedicated hardware that frees up the CPU to run more efficiently. The reason this is so compelling in our view is AWS now has the architecture in place to compete at every level of the massive TAM comprising public cloud, on-prem datacenters and both the near and far edge.

Sets the Direction for the Entire Industry

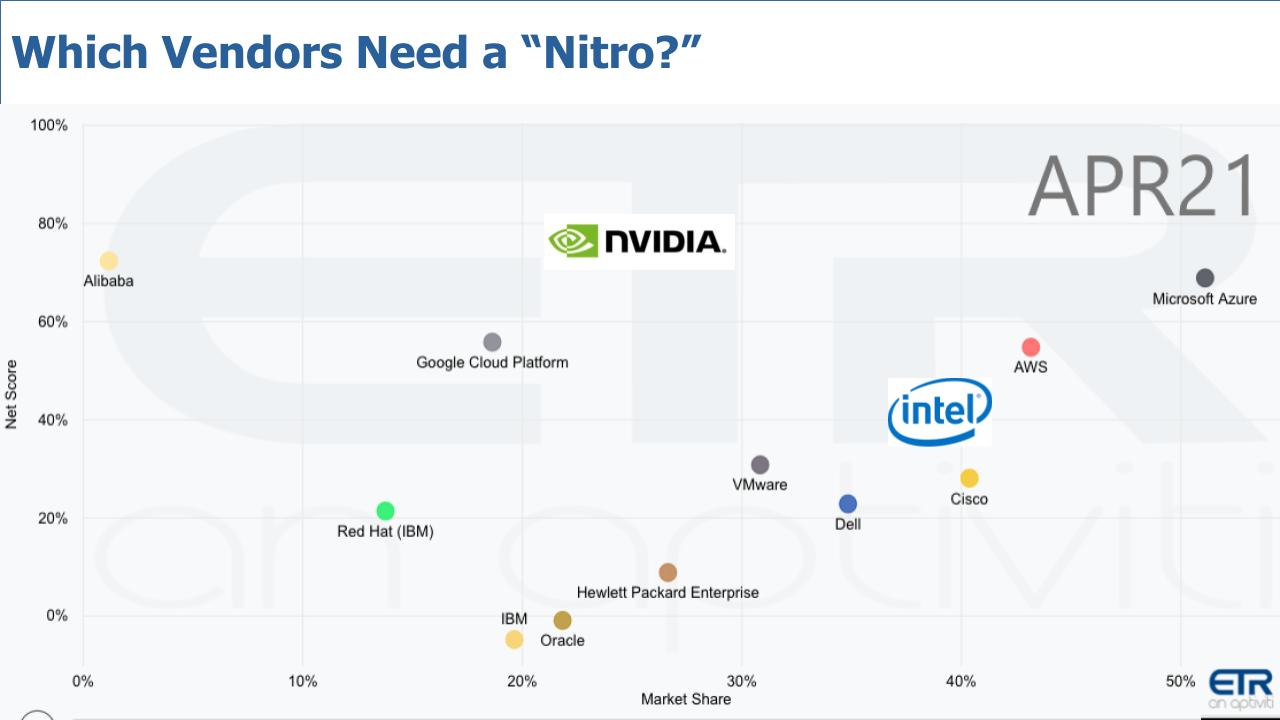

This chart pulls data from the ETR data set. It lays out key players competing for the future of cloud, data center and the edge. We’ve superimposed NVIDIA and Intel. They don’t show up directly in the ETR survey but they clearly are platform players in the mix.

The data shows Net Score on the vertical axis– that’s a measure of spending velocity. Market Share is on the horizontal axis, which is a measure of pervasiveness in the data set. We’re not going to dwell on the relative positions here, rather let’s comment on the players and start with AWS. We’ve laid out the path AWS took to get here and we believe they are setting the direction for the future.

AWS

AWS is really pushing hard on migration from x86 to its Arm-based platforms. Patrick Moorhead at the Six Five Summit spoke with David Brown, who heads EC2 at AWS. Brown talked extensively about migrating from x86 to AWS’ Arm-based Graviton2, and he announced a new developer challenge to accelerate migration to Arm. The carrot Brown laid out for customers is 40% better price performance. He gave the example of a customer running 100 server instances that can do the same work with 60 by migrating to Graviton2 instances. There’s some migration work involved for customers but the payoff is large.
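The arithmetic behind Brown's example can be sketched as follows. The per-instance prices are placeholders we set equal to each other purely for illustration; actual Graviton2 and x86 instance prices differ and are not given in this analysis.

```python
def fleet_savings(baseline_count: int, new_count: int,
                  baseline_price: float, new_price: float) -> dict:
    """Compare two fleets that complete the same total amount of work."""
    baseline_cost = baseline_count * baseline_price
    new_cost = new_count * new_price
    return {
        # How much more work each new instance must do to match the old fleet.
        "per_instance_throughput_gain": baseline_count / new_count,
        # Fraction of the original bill that disappears.
        "cost_reduction": 1.0 - new_cost / baseline_cost,
    }


# 100 x86 instances replaced by 60 Graviton2 instances (figures from Brown's example).
# Prices of 1.0 are hypothetical and assumed equal per instance.
result = fleet_savings(baseline_count=100, new_count=60,
                       baseline_price=1.0, new_price=1.0)
print(result)
```

With equal per-instance pricing, the bill drops by 40%, and each Graviton2 instance is implicitly doing about 1.67 times the work of the instance it replaces. Any real per-instance price difference would shift the cost reduction accordingly.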

Generally, we bristle at the thought of migrations. The business value of migrations is a function of the benefit achieved, less the cost of the migration, which must account for any business disruption, code freezes, retraining and time to value variables. But it seems in this case, AWS is minimizing the migration pain.
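As a rough sketch of that migration calculus, the model below nets a recurring benefit against one-time costs and delays the benefit by the time to value. Every field name and dollar figure is a hypothetical of ours, chosen only to show the shape of the calculation.

```python
from dataclasses import dataclass


@dataclass
class MigrationCase:
    """Hypothetical inputs for a simple migration business case."""
    annual_benefit: float          # recurring savings once the migration is live
    migration_effort_cost: float   # engineering work to port and test
    business_disruption_cost: float
    code_freeze_cost: float
    retraining_cost: float
    months_to_value: int           # months before any benefit is realized

    def one_time_cost(self) -> float:
        return (self.migration_effort_cost + self.business_disruption_cost
                + self.code_freeze_cost + self.retraining_cost)

    def net_value(self, horizon_months: int) -> float:
        """Benefit accrued after time-to-value, less all one-time costs."""
        productive_months = max(0, horizon_months - self.months_to_value)
        benefit = self.annual_benefit * productive_months / 12
        return benefit - self.one_time_cost()


# All numbers below are illustrative placeholders, not data from AWS or ETR.
case = MigrationCase(
    annual_benefit=400_000,
    migration_effort_cost=100_000,
    business_disruption_cost=40_000,
    code_freeze_cost=20_000,
    retraining_cost=20_000,
    months_to_value=3,
)
print(case.net_value(horizon_months=24))
```

The point of the sketch is that a long time to value or a large disruption cost can erase a headline benefit, which is why minimizing migration pain matters as much as the size of the price-performance gain.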

The benefit to customers according to Brown is that AWS currently offers something like 400 different EC2 instances. As we reported earlier this year, nearly 50% of the new EC2 instances shipped last year were Arm-based. And AWS is working hard to accelerate the pace of migration away from x86 onto its own design.

Nothing could be more clear.

Intel

Intel is finally responding in earnest to the market forces. We essentially believe Intel is taking a page out of Arm’s playbook. We’ll dig into that a bit today. In 2015, Intel paid $16.7B for Altera, a maker of FPGAs. Also at the Six Five Summit, Navin Shenoy of Intel presented details of what Intel is calling an IPU – Infrastructure Processing Unit. This is a departure from Intel norms where everything is controlled by a central processing unit. IPUs are basically smart NICs as are DPUs – don’t get caught up in the acronym soup. As we’ve reported, this is all about offloading work, disaggregating memory and evolving SoCs – system on chip and SoPs – system on package.

But let this sink in a bit. Intel’s moves this past week – it seems to us anyway – are clearly designed to create a platform that enables its partners to build a Nitro-like offload capability. And the basis of that platform is a $16.7B acquisition. Compare that to AWS’ $350M tuck-in of Annapurna. That’s incredible.

Now Shenoy said in his presentation “We’ve already deployed IPUs using FPGAs in very high volume at Microsoft Azure and we’ve recently announced partnerships with Baidu, JD Cloud and VMware.”

Let’s look at VMware in particular.

VMware

VMware is the other really prominent platform player in this race. In 2020, VMware announced Project Monterey, which is a Nitro-like architecture that it claims is not reliant on any specific FPGA or SoC. VMware is partnering with and intends to accommodate new technologies including Intel’s FPGA, Nvidia’s Arm-based BlueField NICs and Pensando’s smart NICs. It is also partnering with Dell, HPE and Lenovo to drive end-to-end integration across the respective solutions of those companies.

So VMware is firmly in the mix. However, this is early days and Monterey is a project, not a product. VMware likely chose to work with Intel for a variety of reasons, including the fact that most software running on VMware has been built for x86. As well, Pat Gelsinger was leading VMware at the time and probably saw the future pretty clearly, both the company’s and his own. Despite the Intel connection, the architectural design of Monterey appears to allow VMware to incorporate innovations from other suppliers, including AMD and Arm-based platforms like BlueField.

The bottom line is VMware has a project that moves it toward a Nitro-like offering and appears to be ahead of the non-cloud competition with respect to this trend.

But in our view, being the Switzerland of smart NICs is only a first step toward having full control over the architecture, as AWS has with Nitro. Specifically, we refer to VMware possibly designing an underlying solution optimized for VMware that separates the compute completely from other components. Perhaps this is the intent but currently the details are sketchy.

The next major step would be to design a custom chip like AWS Graviton. Will VMware make that move? It’s not clear. However, VMware doesn’t need to do this in our view because it can partner with the likes of Ampere to accomplish a similar outcome.

Other Hyperscalers

What about Microsoft, Google and Alibaba? Suffice it to say that despite the relationship between Intel and Microsoft, we strongly believe Microsoft and Google, as well as Alibaba, will follow AWS’ lead and develop an Arm-based platform like Nitro. They have to, in our opinion, to keep pace with AWS.

The Rest of the Data Center Pack – Dell, Cisco, HPE, IBM & Oracle

Dell has VMware. Check. Despite the split we don’t expect any real change there. Dell will leverage whatever VMware does and do it better than anyone else. Cisco is interesting in that it just revamped its UCS but we don’t see any evidence that it has Nitro-like plans in its roadmap. Same with HPE. Both of these companies have history and capabilities around silicon: Cisco designs its own chips today for carrier class use cases and HPE, as we’ve reported, probably has remnants of The Machine hanging around. But both companies are very likely to follow VMware’s lead and go with an Intel-based design.

What about IBM? Well we don’t really know. We think the best thing IBM could do would be to move the IBM cloud to an Arm-based Nitro-like platform. And we think the mainframe should move to Arm as well. It’s just too expensive to build a specialized mainframe CPU these days.

And if we were in charge of Oracle, we would build, or partner to build, an Arm-based, Nitro-like database cloud. Where Oracle runs cheaper, faster and consumes less energy than any other platform running Oracle. And we’d go one step further and optimize for competitive databases in the Oracle cloud – and just run the table on cloud database. Imagine Snowflake running in the Oracle cloud!

A Word on FPGAs

We’ve never been overly excited about the FPGA market. Admittedly FPGAs are not this author’s wheelhouse, however we’ve never felt these mega acquisitions were justified. Consider Intel’s move with Altera and AMD acquiring Xilinx for $35B. Both of these were inflated in our view, especially when we compare them with AWS’ Annapurna acquisition. We found a nice overview of the FPGA market from The Next Platform, which positions FPGAs as a declining market. We’re not surprised.

At least AMD is using its inflated stock price to do the deal, but we honestly think that the Arm ecosystem will obliterate the FPGA market by making it simpler and faster to move to SoC with far better performance, flexibility, integration and mobility. We see FPGAs as low volume and not nearly as attractive as programmable innovations coming from the Arm ecosystem.

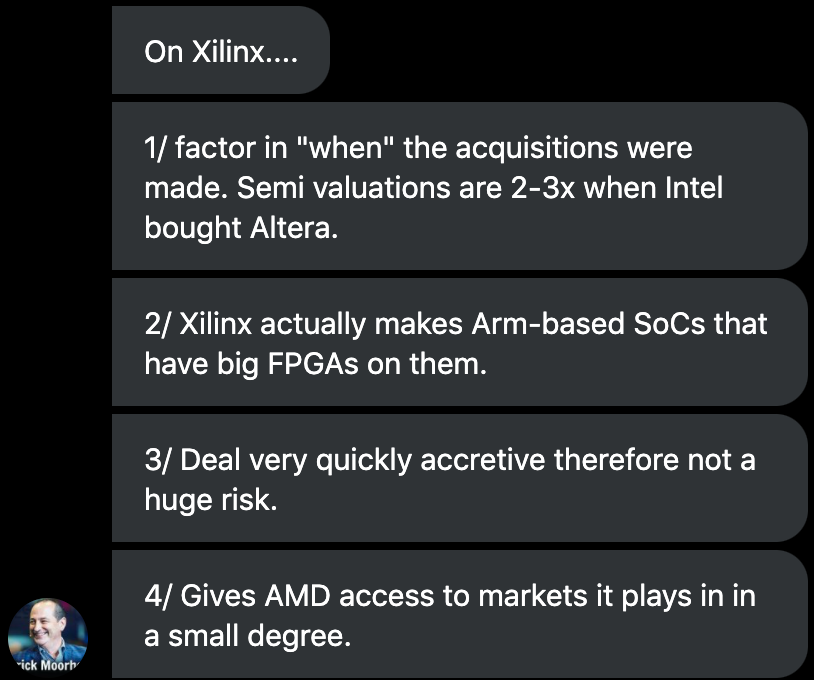

We reached out to Patrick Moorhead to get his perspective on the AMD Xilinx deal – here are his thoughts:

Ok so that’s encouraging feedback from Pat. It looks financially viable given the inflated market conditions and the use of AMD’s stock. We feel that if AMD focuses on integrating Arm components into their designs it could accelerate their business.

We still can’t let go of the brilliance of Amazon’s acquisition of Annapurna for $350M. Amazing.

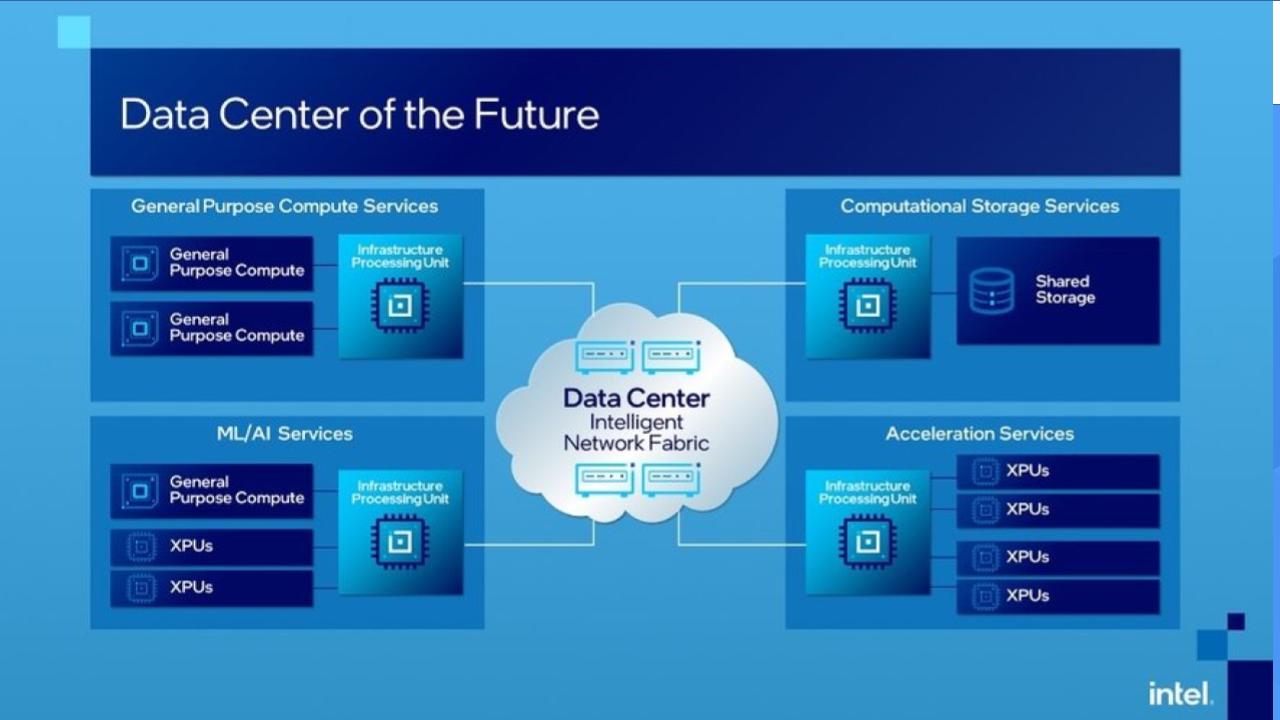

Intel’s Vision for the Data Center of the Future?

The chart above is one that Shenoy showed, depicting Intel’s vision of the future.

Let’s break this down. What you see are the IPUs, which are intelligent NICs embedded in the four blocks shown and communicating across a fabric. General purpose compute is in the upper left, machine intelligence on the bottom left, storage services on the top right and a variety of alternative processors on the bottom right.

This is Intel’s view of how to share resources and go from a world where everything is controlled by a central processing unit to a more independent set of resources that can work in parallel.

And Gelsinger has talked about all the cool tech that this will allow Intel to incorporate, including PCIe Gen 5 and CXL memory interfaces, which enable memory sharing and disaggregation, as well as 5G and 6G connectivity.

How Does Arm View the Future?

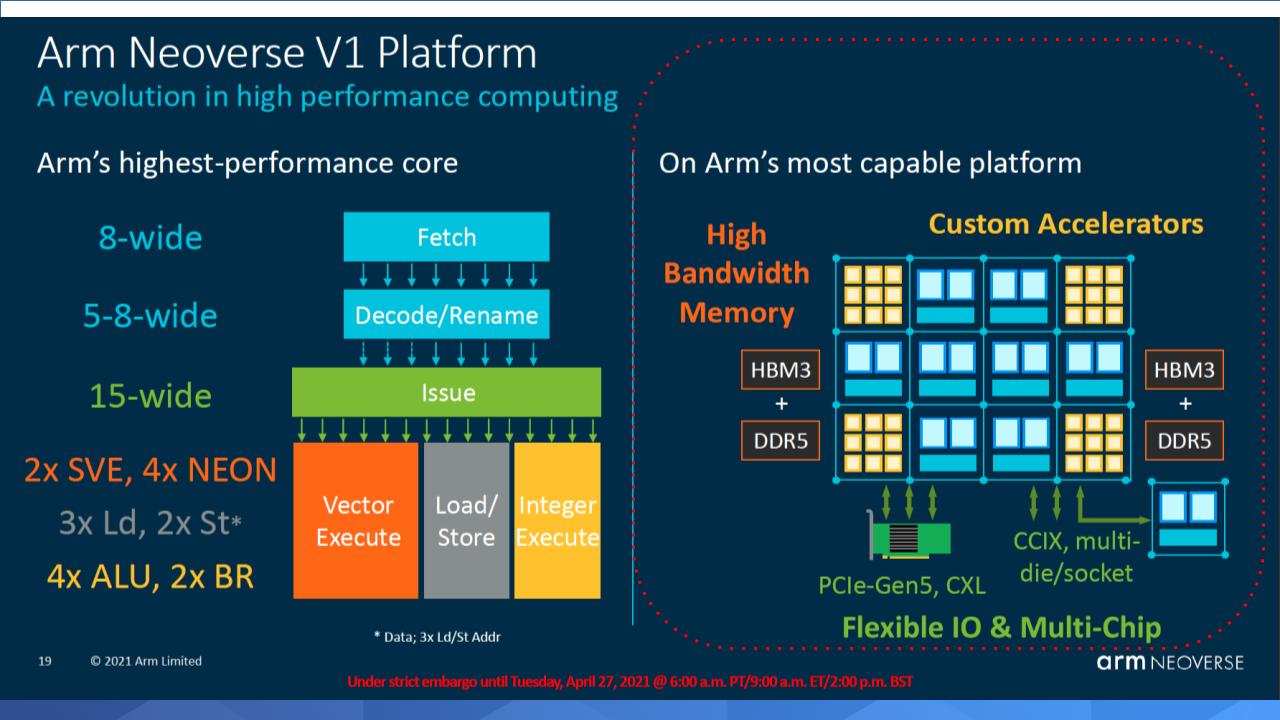

First, Arm marketing tends to be really techie. But there are definite similarities with Intel’s vision as you can see in the diagram, especially on the right hand side as highlighted in the red dotted area. You’ve got blocks of different processor types that are programmable. Notice the “High Bandwidth Memory” HBM3 + DDR5 on the two sides, bookending the blocks – that’s shared across the system. And it’s connected by PCIe Gen 5, CXL or CCIX, multi-die/socket.

OK, so you may be looking at this and saying: two sets of block diagrams, big deal. While there are similarities around disaggregation, implied shared memory and the use of advanced standards, there are also some notable differences.

In particular, Arm is at an SoC level whereas Intel is talking FPGAs. Neoverse, Arm’s architecture, is shipping in test mode and will have product in end markets by late 2022. Intel is talking about 2025 or 2024 at best. Arm’s roadmap is much more clear. Now Intel said it will release more details in October so maybe we’ll recalibrate at that point but it’s clear to us that Arm is way further along.

The other major difference is volume. Intel is coming at this from the high end data center and presumably plans to push down market to the edge. Arm is coming at this from the edge. Low cost, low power, superior price/performance. Arm is already winning at the edge and based on the data we shared earlier from AWS, it’s clearly gaining ground in the enterprise.

History strongly suggests that the volume approach will win.

Implications for Customers & the Ecosystem

Let’s wrap by looking at what this means for customers and the partner ecosystem.

The first point we’d make is follow the consumer apps. The capabilities in consumer apps like image processing, NLP, facial recognition, voice translation – these inference capabilities going on today in mobile will find their way into the enterprise ecosystem.

Ninety percent of costs associated with machine learning in the cloud are around inference. In the future, much of the AI in the enterprise, and most certainly at the edge, will be real-time inference. It’s not happening today in the enterprise because it’s too expensive and immature outside of consumer use cases. This is why AWS is building custom chips for inferencing. It wants to drive costs down and increase adoption.
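A quick sketch shows why targeting inference is so effective when it dominates the bill. The 90% share comes from the text above; the 30% per-inference saving is a hypothetical figure of ours, not an AWS claim.

```python
def total_ml_cost_reduction(inference_share: float, inference_saving: float) -> float:
    """Fraction of total ML spend removed by making only inference cheaper."""
    return inference_share * inference_saving


INFERENCE_SHARE = 0.90   # from the text: ~90% of cloud ML cost is inference
INFERENCE_SAVING = 0.30  # hypothetical saving from a custom inference chip

total = total_ml_cost_reduction(INFERENCE_SHARE, INFERENCE_SAVING)
print(f"Total ML spend falls by about {total:.0%}")
```

Because inference makes up most of the cost, nearly all of any inference saving flows through to the total, whereas the same percentage saving on training would touch only the remaining 10%.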

The second point is you should start experimenting and see what you can do with Arm-based platforms. Moore’s Law is accelerating and Arm is in the lead in terms of performance, price performance, cost and energy consumption. By moving some workloads onto Graviton, for example, you’ll see what types of cost savings you can drive, and possibly new applications you can deliver to the business. Put a couple of engineers on the task and see what they can do in two or three weeks’ time. You might be surprised or you might say it’s too early for us – but find out – you may strike gold.

We would also suggest that you talk to your hybrid cloud provider and find out if they have a Nitro. We shared that VMware has a clear path. What about your other strategic suppliers? What’s their roadmap? What’s the timeframe to move from where they are today (faster boxes every two years with a professional services-led as-a-service pricing model) to something that resembles Nitro and a much more attractive software model? How are they thinking about reducing your costs and supporting new workloads at scale?

And for ISVs – these consumer capabilities we discussed earlier – all these mobile and automated systems in cars now and things like biometrics. These machine intelligence capabilities are going to find their way into your software. And your competitors are porting to Arm, actively. They’re embedding these consumer-like capabilities into their apps. Are you? We would strongly recommend you take a look at that, talk to your cloud suppliers and see what they can do to help you innovate, run faster and cut costs.

Doing nothing and watching to see how the market evolves is a viable strategy sometimes. We don’t think this is one of those cases.

Keep in Touch

Remember these episodes are all available as podcasts wherever you listen.

Email david.vellante@siliconangle.com | DM @dvellante on Twitter | Comment on our LinkedIn posts.

Also, check out this ETR Tutorial we created, which explains the spending methodology in more detail.

Image credit: alepatika23

Note: ETR is a separate company from Wikibon/SiliconANGLE. If you would like to cite or republish any of the company’s data, or inquire about its services, please contact ETR at legal@etr.ai.