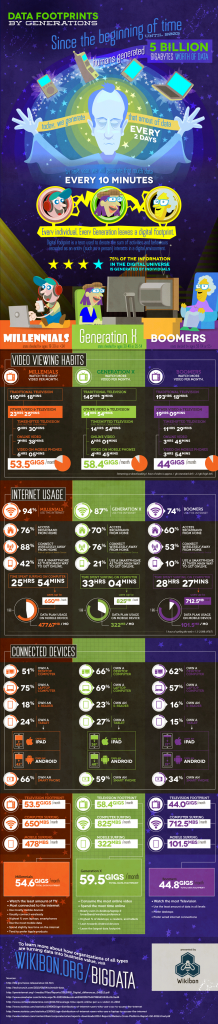

As data is continuously collected and created, companies struggle simply to store it and miss the opportunity to put the information to use. The wave of big data has the potential to turn the burden of data management into an opportunity for new value creation. Yesterday’s solutions don’t accomplish this today and will be even less effective tomorrow.

While the volume of data has grown exponentially over the last few decades, the underlying technology on which we store data has not fundamentally changed. Sure, we’ve had improvements in density (to store more data) and connectivity (to provide better access to data), but the pace of data growth has overwhelmed the benefits of these advances.

For the first time in history, data operating at memory speeds (cost effectively) is a reality due to flash memory (see Wikibon’s flash infographic). This trend is not just about the elimination of spinning media, which is important and is enabled by the persistence of flash. It is also about the degree to which the infrastructure built around spinning disk is outdated, namely protocols (e.g., SCSI) that assume slow, spinning disks are the primary storage medium and therefore introduce latencies that are untenable.

What’s most interesting about the big data explosion, and the role of flash, is the degree to which value will be created from data. Specifically, there is the opportunity to significantly impact organizational productivity and the potential to disrupt entire industry structures. See Wikibon’s Data-in-Flash, Optimizing Database Deployment and Designing Systems and Infrastructure in the Big Data IO Centric Era articles for more guidance on solutions that drive towards this new paradigm.

There is much more to say on this topic, which sits at the intersection of Wikibon’s two primary research initiatives for 2013: Big Data and Software-led Infrastructure (where flash-based persistent storage is one of the key disruptive technologies).