The Central Role of the Data Center

Data centers are at the center of modern software technology, serving a critical role in the expanding capabilities for enterprises. Whereas businesses just a few decades ago relied on error-prone paper-and-pencil documentation methods, data centers have vastly improved the usability of data as a whole. They’ve enabled businesses to do much more with much less, both in terms of physical space and the time required to create and maintain data. But data centers are positioned to play an even more important role in the advancement of technology with new concepts entering the landscape that represent a dramatic shift in the way data centers are conceived, configured and utilized.

The Traditional Data Center Rife with Shortcomings

The traditional data center, also known as a “siloed” data center, relies heavily on hardware and physical servers. It is defined by the physical infrastructure, which is dedicated to a singular purpose and determines the amount of data that can be stored and handled by the data center as a whole. Additionally, traditional data centers are restricted by the size of the physical space in which the hardware is stored.

Storage, which relies on server space, cannot be expanded beyond the physical limitations of the space. More storage requires more hardware, and filling the same square footage with additional hardware means it will be more difficult to maintain adequate cooling. Therefore, traditional data centers are heavily bound by physical limitations, making expansion a major undertaking.

The first computers were very similar to the traditional data center we know today. Siloed data centers, as they’re used today, exploded during the dot-com era in the late 1990s and early 2000s. The rapid increase in the number of websites and applications required physical storage somewhere to house the vast amounts of data required to make those programs run on-demand in the user’s browser.

Early data centers offered sufficient performance and reliability, especially considering there was little point of reference. Even so, slow and inefficient delivery was a prominent challenge, and utilization was astonishingly low in relation to the total resource capacity. It could take an enterprise months to deploy new applications with a traditional data center.

Evolution to the Cloud

Between 2003 and 2010, virtualized data centers came about as the virtual technology revolution made it possible to pool the resources of the computing, network and storage from several formerly siloed data centers to create a central, more flexible resource that could be reallocated based on needs. By 2011, about 72 percent of organizations said their data centers were at least 25 percent virtual.

Behind the virtualized data center is the same physical infrastructure that comprised a traditional, siloed data center. The cloud computing technology both enterprises and consumers enjoy is driven by the same configurations that existed prior to server virtualization – but with greater flexibility thanks to pooled resources.

Server Virtualization Shortcomings Reveal Hidden Opportunity

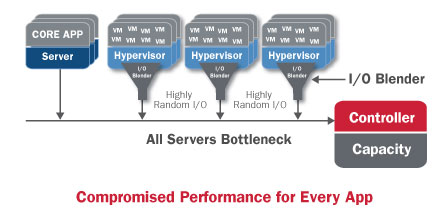

As technology is always pushing forward, the pooling of resources to create larger, more flexible, centralized pools of computing, storage and networking resources doesn’t quite represent utopia in terms of data center design. The individual silos – known as server farms – being pooled must still be maintained and managed separately.

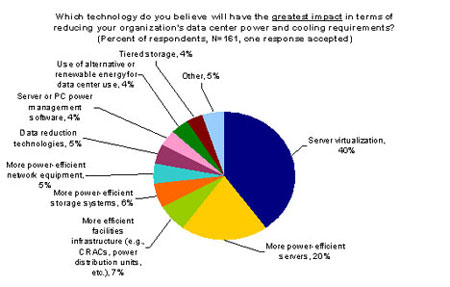

A major challenge in IT today is that organizations can easily spend 70 percent to 80 percent of their budgets on operations, including optimizing, maintaining and manipulating the environment. Server virtualization offered the benefit of better utilization of compute cycles but actually had detrimental impacts on the storage and networking components.

It makes sense for enterprises to move to environments that are easier to manage and require fewer resources to maintain. There are two main options: simplify the environment, either through a converged infrastructure or by moving to a ready-made data center, or outsource all or parts of the environment to a third-party cloud provider, which would then take on some or all of the maintenance burden. For IT, server virtualization wasn’t the answer, but a stepping-stone to the ultimate solution.

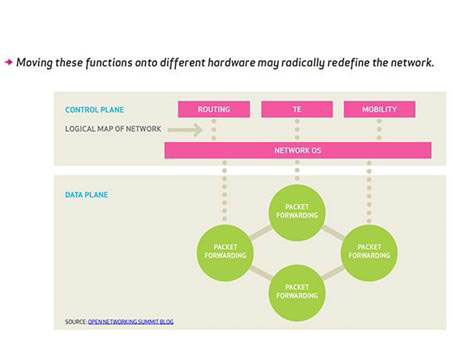

Envisioning Software-Led Infrastructure

Server virtualization provided a taste of what would ultimately be possible. Better utilization is realized when application workloads are at ideal levels. But as a shared resource pool, a sudden, unexpected increase in one application’s utilization means a shortage of available resources for the rest of the applications relying on the same resources.

This means that guest operating systems, or those not native to the data center, could experience a shortage of compute resources. When this occurs, service-level agreements, which typically guarantee server uptimes of close to 100 percent and guarantee the availability of resources, have basically been broken.
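The contention described above can be illustrated with a minimal sketch. The `SharedPool` class, the application names and the unit counts are all hypothetical; the point is only that a fixed pool grants what it has left, so one application's surge leaves another short.

```python
from dataclasses import dataclass, field


@dataclass
class SharedPool:
    """A fixed pool of compute units shared by several applications (hypothetical model)."""
    capacity: int
    allocated: dict = field(default_factory=dict)

    def free(self) -> int:
        """Units not yet handed out to any application."""
        return self.capacity - sum(self.allocated.values())

    def request(self, app: str, units: int) -> int:
        """Grant as many of the requested units as the pool can still supply."""
        granted = min(units, self.free())
        self.allocated[app] = self.allocated.get(app, 0) + granted
        return granted


pool = SharedPool(capacity=100)
pool.request("billing", 30)
pool.request("reporting", 30)

# An unexpected surge in one application takes the rest of the pool.
spike = pool.request("web-store", 40)

# Billing now needs 20 more units, but nothing is left to grant.
shortfall = 20 - pool.request("billing", 20)

print(f"web-store granted {spike}, billing shortfall {shortfall}")
```

In this toy model the billing application is the one whose guaranteed resources are no longer available, which is the situation in which a service-level agreement would be considered broken.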

Programmatically controlling resource allocation to applications made it clear that compute and server resources benefit from increased utilization in the data center environment, but it’s not enough to handle the dynamic workloads of today’s businesses. Most data centers serve a mix of compute-intensive applications and simpler applications that handle important but less demanding tasks associated with running a business.

The Best of Virtualization and Automation

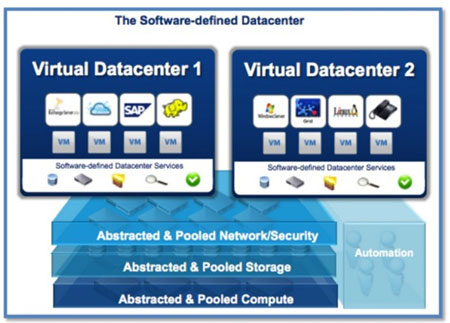

Enter software-led infrastructure (SLI), which aims to capitalize on virtualization technology to take advantage of all the benefits of the virtual data center while enabling portability, functionality, storage and automation of applications both within and between data centers.

The key defining feature of SLI is that total control is exerted by software. That includes automating control of deployment, provisioning, configuring and operation with software, creating one centralized hub for monitoring and managing a network of data centers. Software also exerts automated control over the physical and hardware components of the data center, including automating power resources and the cooling infrastructure, in addition to the networking infrastructure.

Without these vital components, a data center wouldn’t function – and instead of failing one application due to a shortage of compute resources, every application relying on the data center would fail to function. SLI is capable of supporting today’s dynamic workloads by coupling automation with the cloud’s elasticity, which can shrink or expand its capacity based on the demands of the current workload.
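As a rough sketch of what elasticity coupled with automation might look like, the function below picks a capacity for the current demand so that utilization stays near a target. The function name, the 70 percent target and the pool limits are illustrative assumptions, not part of any particular SLI product.

```python
def target_capacity(demand_units: int, target_utilization_pct: int = 70,
                    min_units: int = 2, max_units: int = 50) -> int:
    """Return the capacity needed to serve `demand_units` at roughly the
    target utilization, clamped to the limits of the pool."""
    # Integer ceiling division avoids floating-point rounding surprises.
    needed = -(-demand_units * 100 // target_utilization_pct)
    return max(min_units, min(max_units, needed))


# Hypothetical demand trace: capacity expands with load and shrinks afterwards.
for demand in [1, 7, 21, 3]:
    print(demand, "->", target_capacity(demand))
```

A real controller would also smooth out short spikes and respect provisioning delays; this sketch only shows the shrink-and-expand decision itself.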

Capabilities of SLI

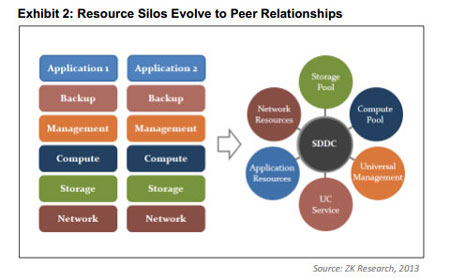

Handling dynamic workloads with automated resource allocation and relieving IT burdens with automated control over physical and network infrastructures aren’t the only capabilities of SLI, also known as a software-defined data center (SDDC), a term coined by VMware’s former CTO, Dr. Steve Herrod. SLI is capable of supporting legacy enterprise applications as well as cloud computing services.

There are currently more virtual than physical workloads deployed within the business landscape. Those workloads require a proportional amount of resources, which would create a significant burden on IT when relying on traditional data centers. But SLI makes resources more fluid and adaptable, so they can be more easily reallocated and shared, not only by applications sharing the resources of a single data center, but even between data centers.

This means resources can be reallocated to different business units seamlessly and as-needed to meet the overall needs of the organization. And with this model, service components shift from being distinct silos to efficient, collaborative peers.

The Future of the Data Center

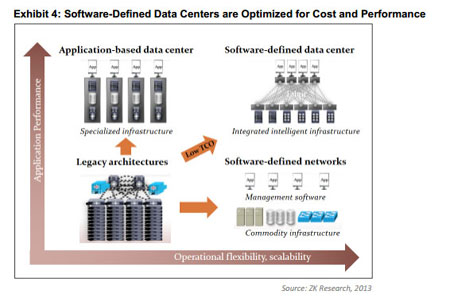

SLI is still in its infancy but is considered the next step in the evolution of data centers, virtualization and cloud computing. The combination of server virtualization, software-defined networks (SDN), software-defined storage (SDS) and automation will enable the creation of a truly dynamic, virtualized data center. SDN and SDS are still new technologies that haven’t yet been firmly established in the data center landscape, but they will have a place in the ultimate vision of the fully-automated, fully-dynamic SLI. Also see Wikibon’s new category – Server SAN – which is an architecture of highly scalable converged compute and storage.

Most importantly, this new model doesn’t fit the traditional equation of more capabilities requiring more resources – physical resources, time, financial resources and more. Instead, SLI enhances functionality and efficiency while reducing the IT and operational burden through automation.

Transitioning to SLI

IT must find more efficient ways to deliver services. SLI facilitates the transition from IT as a cost center to IT as a facet that drives business growth. Broader agility, more flexibility, and an immediate response to business requirements enable a close alignment between IT and business goals – which traditionally can be drastically different.

Benefits of SLI

SLI creates a significantly shorter provisioning time, reducing the typical timeframe of weeks or months to mere minutes. It also completely eliminates business silos, meaning greater visibility across the organization and increased awareness of other areas and requirements.

With full automation and a single, centralized point of control, SLI results in drastically improved resource utilization. In the traditional data center, or the legacy infrastructure, resource utilization maxes out around 30 percent. This is slightly improved with the virtual data center, enabling resource utilization of about 50 percent. SLI will increase this significantly with up to a 70 percent utilization of resources, far more efficient than the former models.
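To make the utilization figures above concrete, here is a small worked calculation. The workload size and per-server capacity are made-up numbers chosen for easy arithmetic; only the 30, 50 and 70 percent utilization levels come from the text.

```python
def servers_needed(workload_units: int, units_per_server: int,
                   utilization_pct: int) -> int:
    """Servers required when each server delivers only `utilization_pct`
    of its nominal capacity as useful work (rounded up)."""
    usable_x100 = units_per_server * utilization_pct
    return -(-workload_units * 100 // usable_x100)  # integer ceiling division


WORKLOAD = 2100        # hypothetical units of useful work
PER_SERVER = 10        # hypothetical nominal capacity of one server

legacy = servers_needed(WORKLOAD, PER_SERVER, 30)       # 700
virtualized = servers_needed(WORKLOAD, PER_SERVER, 50)  # 420
sli = servers_needed(WORKLOAD, PER_SERVER, 70)          # 300

print(legacy, virtualized, sli)
```

Under these assumptions, moving from 30 percent to 70 percent utilization serves the same workload with 300 servers instead of 700, roughly 57 percent fewer.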

Will Data Centers Ever Exist Exclusively in the Cloud?

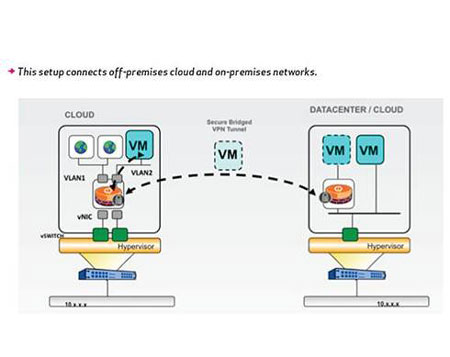

It may seem as though the ultimate goal is for data centers to exist exclusively in the cloud, requiring no physical infrastructure at all. In addition to potentially being a practical impossibility, it’s not actually a desirable solution. Currently, most enterprises have at least some data that should never be stored off-site. That’s precisely why most enterprises presently use a mix of on-premise and cloud data storage and services.

Businesses are increasingly relying on services offered via the cloud, however. On average, a business relies on 545 cloud services in the course of doing business. And as more cloud services become available, businesses are shifting more processes to the cloud, such as email, instant messaging, and office suite cloud applications such as Google Apps.

Future data centers will, however, have a much smaller physical footprint as more components of the data center are virtualized. A smaller set of basic hardware may contain hundreds to thousands of virtual servers and machines, creating practically limitless resources with resource pooling and automation.

Data Center Technologies

Software-led infrastructures have several critical components. One of the defining features of SLI is that all the components necessary to create a functional data center still exist, but they’re virtualized. That means servers, networks, and storage make use of virtualization to create an elastic, adaptable resource pool to accommodate the dynamic workloads of today’s enterprises.

Network virtualization splits the available bandwidth into channels, each of which can be assigned to a specific device or server in real-time. All channels operate independently of one another, although they actually exist within the same pool of bandwidth.
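The idea of carving one pool of bandwidth into independently assigned channels can be sketched as follows. `VirtualLink`, the device names and the bandwidth figures are hypothetical, and real network virtualization involves far more than bookkeeping, but the sketch shows channels being assigned and resized against a shared total.

```python
class VirtualLink:
    """Carves one physical link's bandwidth into independently tracked channels."""

    def __init__(self, total_mbps: int):
        self.total_mbps = total_mbps
        self.channels = {}  # device name -> reserved Mbps

    def available(self) -> int:
        """Bandwidth not yet reserved by any channel."""
        return self.total_mbps - sum(self.channels.values())

    def assign(self, device: str, mbps: int) -> None:
        """Create or resize the channel for `device`."""
        current = self.channels.get(device, 0)
        if mbps - current > self.available():
            raise ValueError(f"not enough bandwidth for {device}")
        self.channels[device] = mbps

    def release(self, device: str) -> None:
        """Return a device's channel to the shared pool."""
        self.channels.pop(device, None)


link = VirtualLink(total_mbps=10_000)
link.assign("db-server", 4_000)
link.assign("web-server", 3_000)
link.assign("db-server", 6_000)   # resize an existing channel in place
print(link.available())
```

Each channel is tracked separately, yet every reservation is checked against the same underlying total, which mirrors the "independent channels, one pool" description above.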

Server virtualization involves masking server resources from users, which relieves the user of having to understand and manage the server’s resources. For enterprises, this means reduced IT demands. It also increases resource sharing and boosts utilization, while allowing for later expansion.

Storage virtualization pools the available storage from multiple network storage devices, creating a centrally-managed pool of storage that can be re-allocated to applications (and ultimately users) to accommodate changing demands. While it’s actually a group of network storage devices, central control and management allows virtualized storage to function as a single entity.
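A minimal sketch of storage pooling, assuming a hypothetical `StoragePool` class and made-up device names and sizes: a volume is requested from the pool as a whole, and the pool decides which devices actually back it.

```python
class StoragePool:
    """Presents several storage devices as one centrally managed pool."""

    def __init__(self, devices: dict):
        self.devices = dict(devices)  # device name -> free GB
        self.volumes = {}             # volume name -> list of (device, GB) extents

    def free_gb(self) -> int:
        """Total free space across all devices in the pool."""
        return sum(self.devices.values())

    def allocate(self, volume: str, size_gb: int) -> list:
        """Allocate a volume, spanning devices when no single one is large enough."""
        if size_gb > self.free_gb():
            raise ValueError("pool exhausted")
        extents, remaining = [], size_gb
        for device, free in self.devices.items():
            if remaining == 0:
                break
            take = min(free, remaining)
            if take:
                self.devices[device] -= take
                extents.append((device, take))
                remaining -= take
        self.volumes[volume] = extents
        return extents


pool = StoragePool({"nas-1": 500, "nas-2": 300, "nas-3": 200})
extents = pool.allocate("crm-db", 600)
print(extents, pool.free_gb())
```

The caller asks for 600 GB and never names a device; the volume ends up spread across two of them, which is the "function as a single entity" behavior described above.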

Implications of Virtualization

Server virtualization is not a new technology. In fact, it’s been central to the data center for more than a decade. Server virtualization itself was a significant step forward for data center technology, enabling a level of resiliency, agility and automation not possible within the constraints of the physical, on-premise framework.

Bringing the benefits of virtualization to every layer of the data center framework is one of the key features of SLI. This brings the desirable attributes, including resiliency, agility and automation, to the complete application delivery stack – from networks all the way down to applications – in a way that not only maximizes, but expands the available resources.

One essential technology that enables the creation of SLI is the flash hypervisor. This is the key technology that virtualizes storage-side flash, enabling rapid expansion of storage capacity to accommodate demands. The flash hypervisor aggregates all the available flash storage into groups, facilitating advanced speed and performance.

The scaling-out possible with the flash hypervisor is completely independent of storage capacity. In other words, there’s no need to expand the existing storage infrastructure. Much like the standard hypervisor accomplishes for CPU and RAM, the flash hypervisor abstracts physical storage on server flash devices into a resource pool.

Infrastructures and Automation

At the foundation of all of this intangible technology lies the physical infrastructure. This is still a critically important component of SLI – without the physical servers and hardware, components like storage and networking cannot be abstracted from the physical underpinnings in order to pool collective resources.

But the differentiating factor in all of this is that while the physical infrastructure still exists, control and management of the physical infrastructure is entirely automated via software – hence the term software-led infrastructure. But automation also extends beyond the physical components to the resources required to power the physical infrastructure. That means power itself, as well as cooling, essential for maintaining uptimes and fully-operational services.

The automation that defines SLI enables the dynamic control of resources which will eliminate the need to expand the physical infrastructure to accommodate growing demands. This is the hallmark of SLI: the ability to scale and accommodate dynamic workloads without the significant investments in reconfiguration and hardware required in the legacy model.

Key Players in the Current Data Center Landscape

There are several key players in the current data center landscape, many of them providing IaaS or SaaS services to enterprises who want to reduce their overhead costs and the demands on in-house IT by sharing data center resources with other businesses.

Those offering IaaS and SaaS manage the data center’s physical infrastructure and virtualized servers for all subscribers, making these options attractive for budget-constrained startups and actually reducing the barriers to entry in many high-tech fields.

Top-Tier Data Center Players (Mega Clouds)

1. Amazon

With Amazon Web Services, users can “launch virtual machines or apps within minutes,” a drastic reduction in provisioning time compared to traditional models. Through a collection of remote computing services, Amazon Web Services creates and offers a cloud computing platform to subscribers.

2. Google Cloud Platform

An obvious contender as one of the largest data center players, Google offers options via Google Cloud Platform and Google Compute Engine. Google’s Cloud Platform enables developers to build, test and deploy applications on the same infrastructure that powers Google’s vast capabilities. Google Compute Engine offers 99.95 percent monthly SLAs with 24-7 support.

3. Microsoft – Windows Azure

Microsoft rounds out the big three when it comes to major players in the cloud computing space with Windows Azure, offering the services of Microsoft-managed data centers in 13 regions around the world. With per-minute billing and built-in auto-scaling, it proves to be a cost-efficient platform to build and deploy applications of any size.

Other Data Center Players

The options aren’t limited to world leaders like Amazon, Google and Microsoft when it comes to cloud computing, however. There are plenty of second-tier cloud computing providers meeting the needs of small businesses, startups and major enterprises across the globe.

1. Rackspace

Rackspace has been in the cloud computing space since its very early inception, having previously provided legacy data center infrastructures to enterprises. Today, Rackspace enables customers to create a customized hybrid cloud solution to closely match business goals and requirements.

2. Facebook

Facebook isn’t generally thought of as being heavily involved in the data center space. But as the company is one of the world’s largest storers of data, it’s becoming increasingly influential when it comes to cloud computing. Facebook is a different type of cloud service in that it doesn’t rent out its infrastructure for use by other enterprises, but offers a cloud service to the end user as its primary business. Facebook users who post updates, comments and photos are storing their data in the cloud – powered by Facebook’s massive data centers. The company is influencing the space by implementing data centers powered by wind and another custom-built data center utilizing cold-storage technology to reduce the environmental burden of cooling costs. Facebook also kicked off the Open Compute Project, which is creating less expensive scalable infrastructure based on standardized hardware.

3. VMware

VMware offers several configurations of cloud computing services. It was VMware which coined the term software-defined data center, and the company’s present hybrid offering does incorporate some SDDC functionality. From Cloud Operations Services to the VMware vCloud® Suite private cloud infrastructure and vCloud Hybrid Services (vCHS), enterprises can choose from a range of products to design the proper cloud configuration for their business needs.

4. IBM Softlayer

IBM Softlayer is the computing giant’s foray into the cloud environment, making cloud computing simple for enterprises with a single API, portal and platform to control and manage the company’s data and applications. Choose from dedicated or managed services, along with a robust selection of solutions and customization options.

5. GoGrid

GoGrid facilitates the horizontal scaling big data requires through a set of solutions which can be used in a variety of combinations to create customized cloud computing and data center environments. There’s no one-size-fits-all solution for handling massive amounts of data, making these customizations ideal for enterprises with big data needs.

6. Century Link Technology Solutions (formerly Savvis)

Century Link Technology Solutions, formerly known as Savvis, offers a comprehensive suite of cloud services, powering some of the world’s largest brands. From application outsourcing to infrastructure, storage, managed hosting and colocation, Century Link Technology Solutions offers the technology needed for the end-to-end needs of modern businesses.

7. Verizon Terremark

Terremark is Verizon’s cloud service, serving enterprises and the federal government with IT infrastructure, cloud services, managed hosting and security solutions. Flexible data protection and some of the industry’s most iron-clad security are hallmarks of Verizon’s cloud computing offerings.

8. CSC CloudCompute

CSC CloudCompute offers IaaS, hybrid cloud management, SAP, mail and collaboration, based on leading technologies that accelerate development, mitigate risk and streamline migration. CSC uses its customized WAVE approach to accelerate application migration, an ACE Factory for application cloud enablement, and the ability to x86 migrate cloud-ready workloads to CSC CloudCompute, CSC BizCloud and CSC BizCloud VPE.

9. Brocade

Brocade, as a networking provider, aims to provide an infrastructure configurable by and responsive to the provisioning frameworks emerging as new technologies. Providing quality service to virtual machines that rivals what was previously possible with the physical infrastructure positions Brocade as a company that will successfully ride the evolution to SLI.

10. Alcatel-Lucent

Alcatel-Lucent is another cloud services provider helping to pave the way for a seamless evolution to the new, physically-independent data center infrastructure. The company’s Nuage Networks™ product portfolio offers software-defined networking – still a relatively new technology in the SLI landscape. Nuage Networks Virtualized Services Platform (VSP) works with an enterprise’s existing network to expand its capabilities through scalability, automation and optimization. Alcatel-Lucent also offers a number of other technology solutions powering a variety of enterprises and industries.

11. ProfitBricks

ProfitBricks optimizes the price/performance ratio through fully scalable cloud computing solutions for developers, startups and other enterprises. A second-generation cloud-computing provider, ProfitBricks offers IaaS built on Cloud Computing 2.0. With independently customized servers, cloud storage and cloud networks, coupled with an easy data center design tool, ProfitBricks reduces barriers to entry both in terms of cost and usability for startups and other businesses that wouldn’t otherwise be able to capitalize on the power of the cloud.

12. Dell Cloud Dedicated

Dell, traditionally known for its physical computing devices, also provides private cloud IaaS hosted in a secure Dell data center. Dell Cloud Dedicated uses VMware-based IaaS with multiple infrastructure and network connectivity options, data protection through local backup and recovery and other measures, server colocation, environmental controls compliant with HIPAA and HITECH Act privacy and security requirements, and a variety of other options and benefits.

13. Bluelock

Virtual data centers, cloud management, hybrid cloud and managed services make Bluelock a versatile service provider for enterprises with varying cloud computing requirements. Coupled with Recovery-as-a-Service (RaaS), Bluelock is both a secure and scalable solution offering on-demand resources to power applications and handle the shifting needs of the modern enterprise. Bluelock’s virtual data centers are based on VMware vCloud® technology and are hosted in the public cloud.

14. Stratogent

Expanding the capabilities of in-house IT teams, Stratogent provides a suite of managed services and supports server, storage, cloud, and network and security technologies from multiple third-party providers, such as Rackspace and Amazon Web Services.

15. Joyent Manta

Joyent has introduced Joyent Manta Storage Service, a scalable object storage service with integrated compute capabilities via Joyent Compute. Instead of extracting data for computation, Joyent Compute brings the compute to the data for flexible analytics. Joyent also provides related technologies, including a private cloud, all of which integrate seamlessly for customized solutions.

These 18 companies represent some of the most influential players in the current cloud computing landscape. Several are poised to navigate enterprises to the next wave of data center technology to provide powerful cloud computing and data center solutions offering unparalleled scalability, efficiency and networking capabilities.

OpenStack: Multiple Distribution Options for Production Deployment

There’s another entity that’s widely used in the cloud computing landscape. OpenStack is primarily considered an open-source project. While the ability to download, modify and deploy custom applications – and even distribute them – with no licensing fees is attractive, this option means the enterprise takes full responsibility for the deployment, management and support.

Several vendors saw the opportunity and built production-ready platforms based on the OpenStack source code. Even enterprises with internal engineering and development teams often lack the substantial resources required to develop, package and support a proprietary OpenStack-based platform.

The result is a number of packaged, production-ready products powered by OpenStack but enhanced with value-added benefit, such as configuration management tools, software support and integration testing. A few vendors in this space include:

- Mirantis OpenStack

- Piston OpenStack

- Rackspace Private Cloud

- Red Hat Enterprise Linux OpenStack Platform

Another way to take advantage of OpenStack is via an OpenStack-powered public cloud, such as HP Public Cloud or Rackspace Public Cloud. Another option is a private cloud with the OpenStack platform, such as Rackspace Private Cloud or Blue Box Hosted Private Cloud. The advantage to either the public or private as-a-Service cloud options is that they reduce the demands of managing and supporting the underlying infrastructure, freeing up the enterprise’s resources for application development.

Limitations of the Current Data Center Environment

The current data center environment has its share of shortcomings, even as new technologies have improved traditional models in terms of capacity, utilization and efficiency. But enterprises still require greater flexibility. According to Forbes, enterprise architecture is shifting from optimizing systems for current loads to creating a foundation for more flexible environments that can be redesigned and restructured without necessitating an infrastructure rebuild.

Additionally, enterprise architecture requirements can vary significantly based on industry and business model. The requirements for an e-commerce enterprise may look different from those of an enterprise in the trading or government contracting verticals, for example. This doesn’t always prevent these enterprises from tapping into the same resource pool, but it can necessitate that an organization configure its own private, on-premise data center – which carries significantly higher costs than utilizing the virtual data centers available for SaaS, IaaS and simple data storage.

Another major shortcoming of the existing data center landscape is that a significant disconnect exists between IT requirements and objectives and business goals. As mentioned previously, there’s a shift from IT essentially being a major operational expense to it being a valuable resource that can help advance business goals.

The biggest point of contention is often who should ultimately control the final architecture. The only workable solution, at present, is to obtain input from both facets of the organization. After all, only they know what they need from the data environment.

But this still doesn’t alleviate the entire challenge. Even IT leaders disagree on what the ideal data center actually looks like, and therefore how the architecture should be built. There are two general options when it comes to EA:

- Flat – Creates more balanced functionality across business units,

- Layered – Layered on business, technology and user planes, but offering more centralized management and control.

The bottom line in the current debate often comes down to whose needs are more important: IT, or the business units.

Cloud Computing Landscape and the Data Center

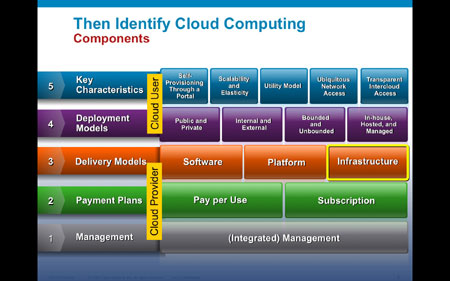

The Enterprise Architect defines six layers of cloud platforms, listed from the top-down:

- SaaS – Layer 6

- App Services

- Model-Driven PaaS

- PaaS

- Foundational PaaS

- SDDC/SLI – Layer 1

As you can see, SLI is poised to become the foundational layer of cloud computing, but this layer may currently exist as any modern data center configuration. Layers one through five are all required to run an application in the cloud.

There are three aspects – or functions – associated with each layer:

- Compute (compared to the application’s or software’s behavior),

- Communicate (compared to the software’s messaging),

- Store (compared to the software’s state).

In SLI, each of these functions is abstracted or isolated from the physical hardware. All components would therefore be built on the virtualization layer created by SLI, and they would all consist of software.

Currently, then, the data center is not completely virtualized. Services such as IaaS and SaaS build on the virtualization components of the data center to make data more accessible. But cloud computing is ultimately changing the way we think about data centers. It’s changing the way data centers are structured and managed. It’s also creating new technologies that apply the same virtualization principles of the cloud to the other components of a data center in the form of SDS and SDN.

Data centers are moving towards a model of efficiency and better scalability. There’s a ton of pressure on data centers to lower cost margins and accept reduced profit per volume, partly due to the affordable alternatives created by IaaS and SaaS. By 2018, 30.2 percent of computing workloads are expected to run in the cloud. As of 2012, surveys indicated that 38 percent of businesses were already using the cloud, and 28 percent had plans to either initiate or expand their use of the cloud.

Data Centers and the Cloud: The Chicken or the Egg?

What’s intriguing to many about the data center’s relation to cloud computing is that it creates a chicken-or-the-egg phenomenon. While a data center is required to create a cloud computing environment, data centers are capitalizing on the virtualization technology behind the cloud to create even more efficient and dynamic data centers.

With any data center, the physical infrastructure exists somewhere. And it will continue to exist even in the fully-virtualized SLI of the future – but on a much smaller scale, and more easily managed through automation. Virtualization and resource pooling enable more efficient use and increase resource utilization, reducing the environmental footprint of the physical infrastructure.