A better than expected earnings report in late August got people excited about Snowflake again but the negative sentiment in the market has weighed heavily on virtually all growth tech stocks. Snowflake is no exception. As we’ve stressed many times, the company’s management is on a long term mission to simplify the way organizations use data. Snowflake is tapping into a multi-hundred billion dollar total available market and continues to grow at a rapid pace. In our view the company is embarking on its third major wave of innovation, data apps, while its first and second waves are still bearing significant fruit. For short term traders focused on the next 90 or 180 days, that probably doesn’t matter much. But those taking a longer view are asking, should we still be optimistic about the future of this high flier or is it just another over-hyped tech play?

In this Breaking Analysis we take a look at the most recent survey data from ETR to see what clues and nuggets we can extract to predict the near future and the long term outlook for Snowflake.

Tough Sledding for Snowflake Bulls

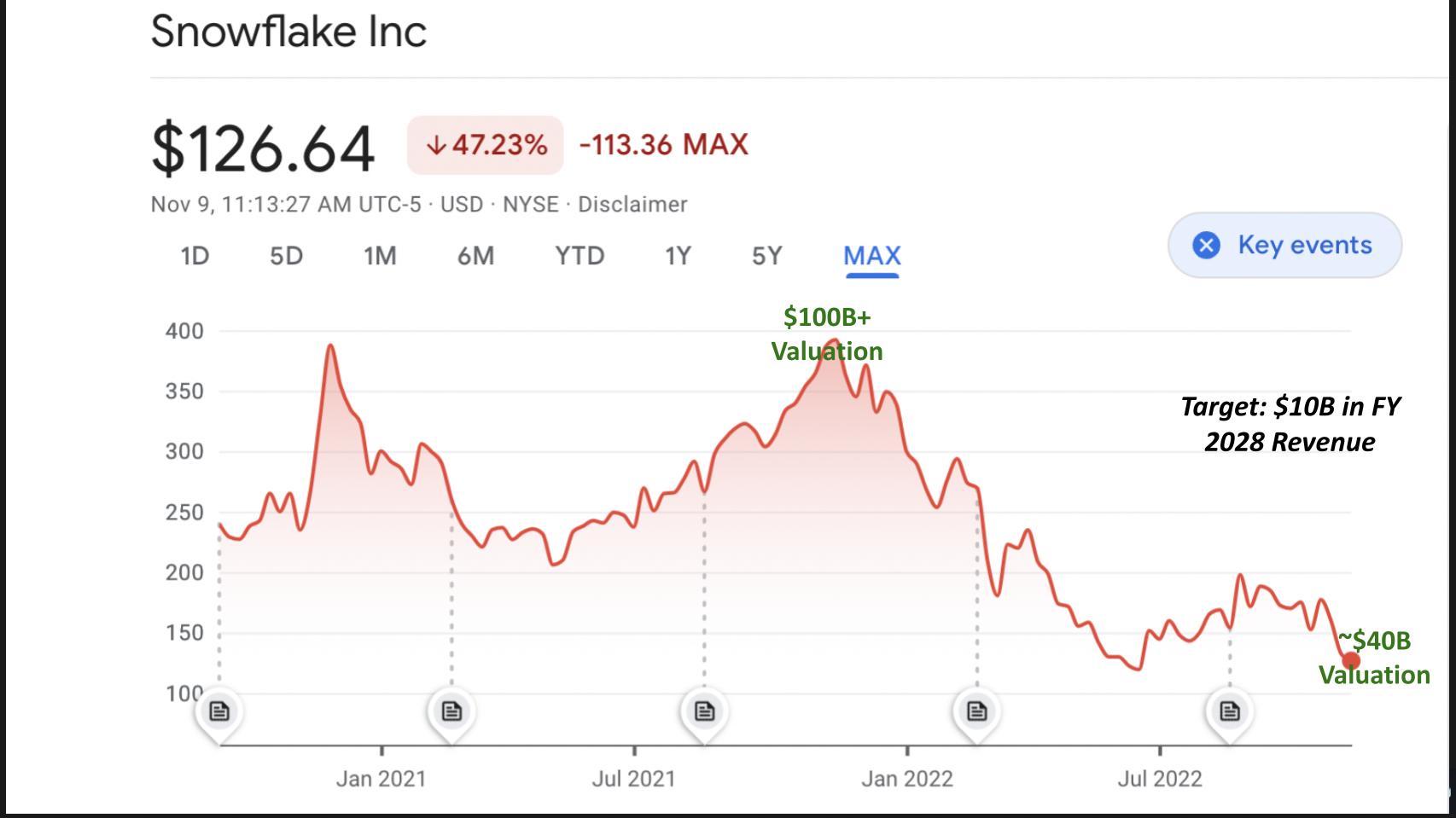

You know the story. If you’ve been an investor in Snowflake this year, it’s been painful. We said at IPO, if you really want to own the stock on day one you’d better hold your nose and just buy it. But like most IPOs there will likely be better entry points in the future. And not surprisingly that’s been the case. Snowflake IPO’d at a price of $120, which you couldn’t touch on day one unless you got into a friends and family offer. And if you did you’re still up 5% or so. Congratulations. But at one point last year you were up well over 200%. That’s been the nature of this volatile stock and we certainly can’t help you with timing the market.

What the Short-term Trader Thinks

We asked our expert trader and Breaking Analysis contributor, Chip Symington, for his thoughts on Snowflake. He said he got out of the stock a while ago after having taken a shot at what turned out to be a bear market rally. He pointed out that the stock had been bouncing around the 150 level for the last few months and broke that to the downside last Friday (11/4). So he’d expect 150 to be where the stock finds resistance on the way back up. But there’s no sign of support right now. Maybe at 120, which was the July low and the IPO price. Perhaps earnings will be a catalyst when Snowflake announces on November 30th, but until the mentality toward growth tech flips, nothing’s likely to change dramatically according to Symington.

You had me at $10B

Longer term Snowflake is targeting $10B in revenue for FY 2028. That’s what’s most interesting in our view. It’s a big number. Is it achievable? Many people are asking why isn’t the target even more aggressive?

Tell you what – Let’s come back to that topic a bit later in the post.

Spending Patterns have Softened but are Still Strong for Snowflake

So now that we have the stock talk out of the way, let’s take a look at the spending data for Snowflake in the ETR survey.

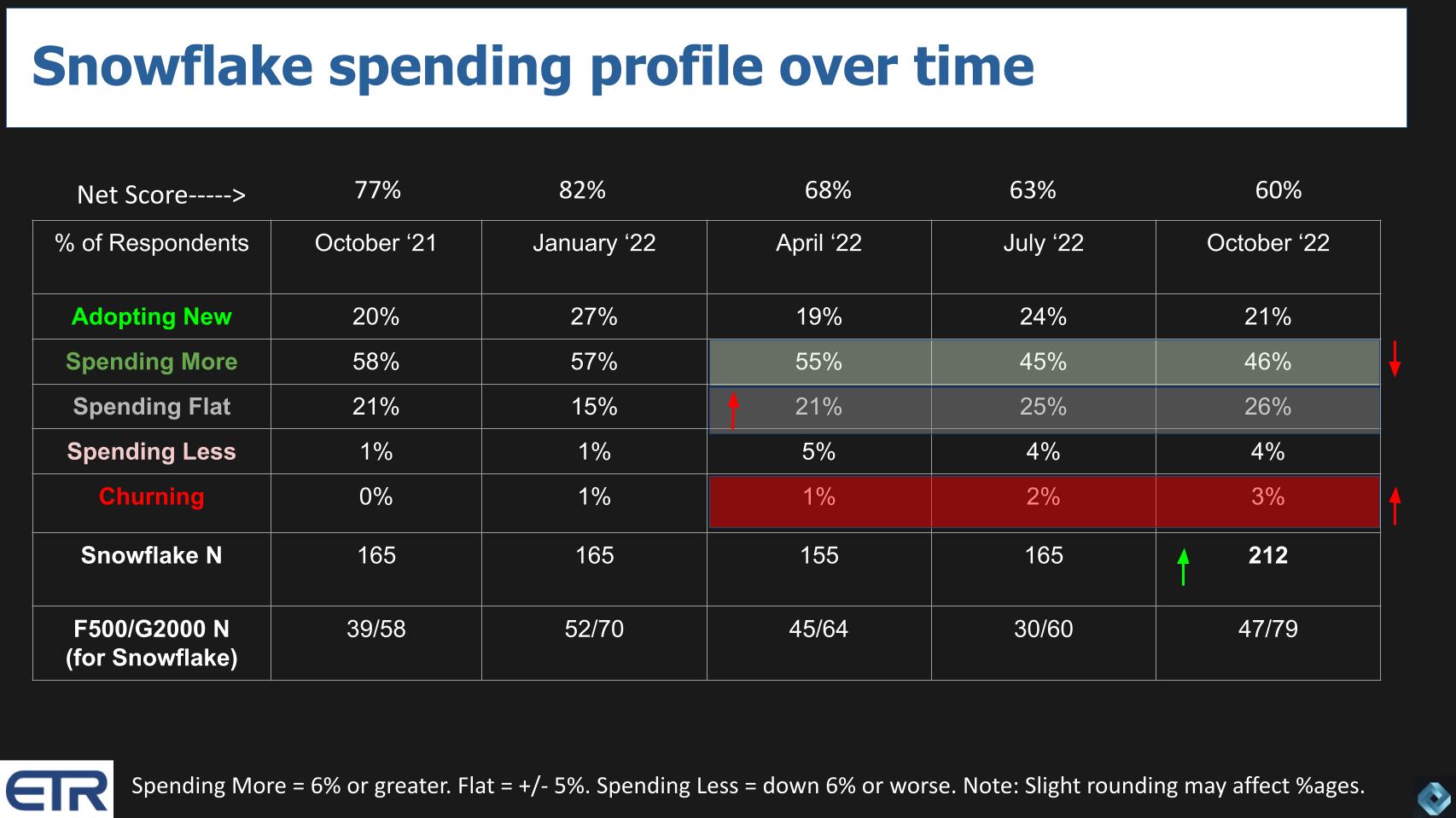

First let’s explain ETR’s proprietary methodology. Net Score is a measure of spending velocity. It’s derived from a quarterly survey of IT buyers (N ranges from 1,200 to 1,400) that asks respondents whether they are: 1) Adopting a platform new; 2) Increasing spend by 6% or more; 3) Keeping spend flat; 4) Cutting spend by 6% or more; or 5) Leaving the platform.

Subtract the percent of customers spending less or churning from those spending more or adopting and you get a Net Score, expressed as a percentage of customers responding specific to a platform.
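To make the arithmetic concrete, here is a minimal sketch of the Net Score calculation as we've described it. The function name and the respondent counts are our own illustrative assumptions, not ETR's code or actual survey data.

```python
def net_score(adopting, increasing, flat, decreasing, leaving):
    """Net Score as a percentage of customers responding for a platform.

    Each argument is a count of respondents in one of the five survey
    buckets. The name and signature are hypothetical, for illustration.
    """
    total = adopting + increasing + flat + decreasing + leaving
    if total == 0:
        raise ValueError("no respondents for this platform")
    positive = adopting + increasing   # adopting new or spending more
    negative = decreasing + leaving    # spending less or churning
    # Flat spenders count in the denominator but on neither side.
    return 100.0 * (positive - negative) / total


# Hypothetical counts, NOT real survey data.
score = net_score(adopting=15, increasing=45, flat=30, decreasing=6, leaving=4)
print(f"Net Score: {score:.0f}%")
```

Note that customers keeping spend flat don't count for or against a vendor. They only dilute the score by adding to the total, which is why a shift from "spending more" to "flat" pulls Net Score down even with no rise in churn.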

The chart above shows the time series and breakdown of Snowflake’s Net Score going back to the October 2021 survey. At that time Snowflake’s Net Score stood at a robust 77%. The action in the survey after that time frame has been notable. In the chart we show Snowflake’s N out of the total survey for each quarter. We also show in the last row, the number of Snowflake respondents from the Fortune 500 and the Global 2000 – two important Snowflake constituencies.

What this data tells us is the following:

- Snowflake exited 2021 with very strong momentum and a Net Score of 82% which was actually accelerating relative to the previous quarter;

- By April that sentiment had flipped and Snowflake came down to earth with a 68% Net Score. Still highly elevated relative to its peers but meaningfully down. Why?

- Because we saw a drop in new adds and an increase in the percentage of customers keeping spend flat. Sentiment was turning cautious and customers can dial down their cloud spend as they need to (even though they’re committed to spend over the term);

- Then into the July and most recent October surveys you saw a significant drop in the percentage of customers spending more;

- Notably, the percentage of customers who are contemplating adding the platform is staying strong but is off a bit this past survey. All combined, with a slight uptick in planned churn, Snowflake’s Net Score is now down to 60%. Still 20 percentage points higher than our highly elevated benchmark of 40%. But down. And while 3% churn is very low, in previous quarters we’ve seen Snowflake with 0% or 1% decommissions.

The last thing to note on this chart is the meaningful uptick in survey respondents citing Snowflake – up to 212 in the survey from a previous figure of 165. Remember this is a random survey across ETR’s panel of 5,000 global customers. There is a North America bias but the data confirms Snowflake’s continued penetration into the market despite facing economic headwinds and stiff competition.

That said, it’s hard to imagine that Snowflake doesn’t feel the softening in the market like everyone else. Snowflake is guiding for around 60% growth in product revenue for the most recent quarter which ended on 10/31. This comes against a tough compare to the quarter a year ago. Snowflake is guiding 2% operating margins. Like every company, the reaction of the street will come down to how accurate or conservative the guide is, how consensus interpreted that guide and ultimately what customers consumed in the quarter.

How Snowflake’s Hybrid Consumption Pricing Model Translates

To reiterate, it’s our understanding that Snowflake customers have a committed spend over the period of time (the term). And they can choose to delay that spend at their discretion and dial down usage to optimize cloud costs during the term. That’s clearly happening broadly in the market. Cloud optimization is the second most commonly cited cost reduction technique in the ETR surveys (behind consolidating redundant vendors). This trend will cause lumpiness in the cloud numbers. Further, it is our understanding that this committed spend can be rolled into future credits after the initial term, as long as the customer renews their contract with Snowflake.

It’s a longer topic that we’ll dig into in a future Breaking Analysis. But the data shows customers are mixed in their preference between consumption and subscription models. The largest share of customers in a recent ETR Drill Down survey (N=300 cloud customers), 48%, either prefer or are required to choose subscription models. Twenty-nine percent (29%) prefer or are required to choose consumption-based models, with the balance (roughly 23%) indifferent.

The point is Snowflake has a hybrid model in the sense that you can dial up or down your usage (consumption) but you’re committed to a level of spend that’s negotiated (subscription). The more you spend the better the price per credit.
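Here's a rough sketch of how we think about that hybrid model, based only on our understanding described above. The class, its fields and the dollar figures are hypothetical. We also assume that unused commitment carries no rollover value if the customer does not renew, which is our simplification rather than a confirmed contract term. Volume discounts on price per credit are left out.

```python
from dataclasses import dataclass, field


@dataclass
class CommittedContract:
    """Toy model of a committed-spend contract with variable usage."""

    committed_spend: float                        # negotiated for the term
    usage_by_period: list = field(default_factory=list)

    def record_usage(self, amount: float) -> None:
        """Record one period of consumption; customers can dial it up or down."""
        if amount < 0:
            raise ValueError("usage cannot be negative")
        self.usage_by_period.append(amount)

    @property
    def consumed(self) -> float:
        return sum(self.usage_by_period)

    @property
    def unused(self) -> float:
        # Commitment not yet consumed; never below zero.
        return max(self.committed_spend - self.consumed, 0.0)

    def rollover_on_renewal(self, renews: bool) -> float:
        # Assumption: unused commitment becomes future credit only on renewal.
        return self.unused if renews else 0.0


# Hypothetical numbers: a customer dials usage down mid-term.
contract = CommittedContract(committed_spend=1200.0)
for usage in [100.0, 80.0, 60.0, 90.0]:
    contract.record_usage(usage)

print(contract.consumed, contract.unused, contract.rollover_on_renewal(True))
```

The sketch shows why consumption can look lumpy quarter to quarter even when the committed total hasn't changed.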

Streamlit is Showing Real Momentum

Earlier this year Snowflake acquired a company called Streamlit for around $800M. Streamlit is an open-source Python library that makes it easier to build data apps with machine learning. And like Snowflake generally, its focus is on simplifying the complex – in this case making data science easier to integrate into data apps that business people can use. Snowflake made a number of related announcements this past week including native Python support.

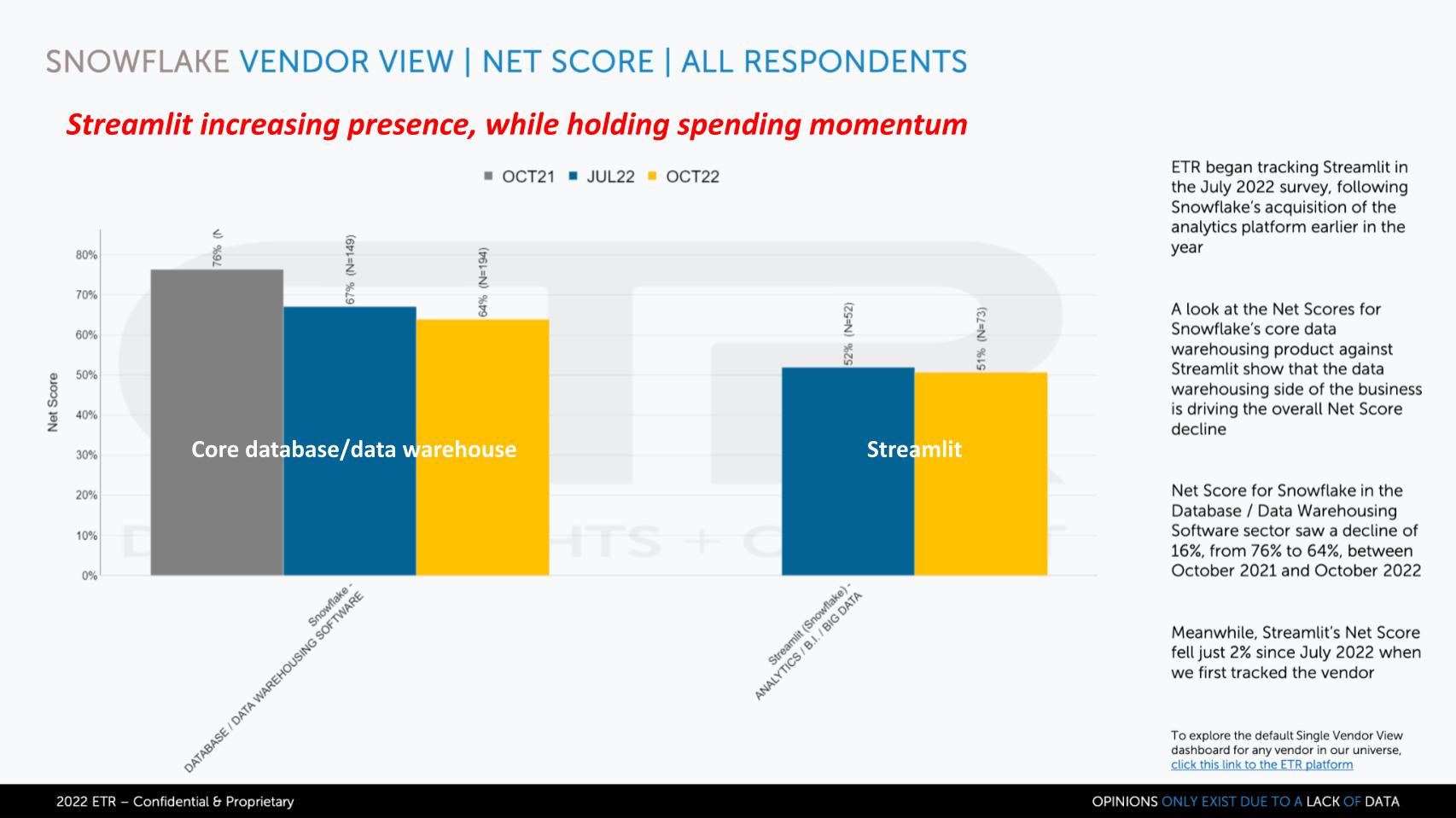

We were excited this summer to see some meaningful data on Streamlit in the July ETR survey, which we’re showing here in comparison to Snowflake’s core business.

In the chart above we show Net Score over time for Snowflake’s core database/data warehouse offering as compared to Streamlit. Snowflake’s core product had 194 responses in the October ‘22 survey. Streamlit had an N of 73, up noticeably from 52 in the July survey. It’s hard to see but Streamlit’s Net Score stayed pretty constant at 51%, while core Snowflake came down to 64% – both well over the magic 40% mark.

There are two key points here:

- The Streamlit acquisition seems to be paying off really quickly, opening new opportunities for Snowflake. Streamlit is gaining exposure right out of the gate as evidenced by the larger number of responses in the ETR survey;

- The spending momentum, while lower than Snowflake overall, is very healthy and steady.

The Streamlit acquisition expands Snowflake’s TAM and is a critical chess piece in attracting developers. We’ll dig more into this topic later in the post.

Comparing some Key Data Platforms

In previous Breaking Analysis segments we’ve assessed various competitive angles with a number of analysts. Snowflake is moving from its enterprise analytics roots into the realm of data science while Databricks is coming at the traditional data warehouse / analytics market from its stronghold position as a data science platform. Streamlit extends Snowflake’s moves into Databricks’ domain. Meanwhile, Databricks is behind a number of open projects (e.g. Delta Sharing) that are designed to substantially replicate Snowflake’s value proposition.

And these two players are far from the only two going after the prize.

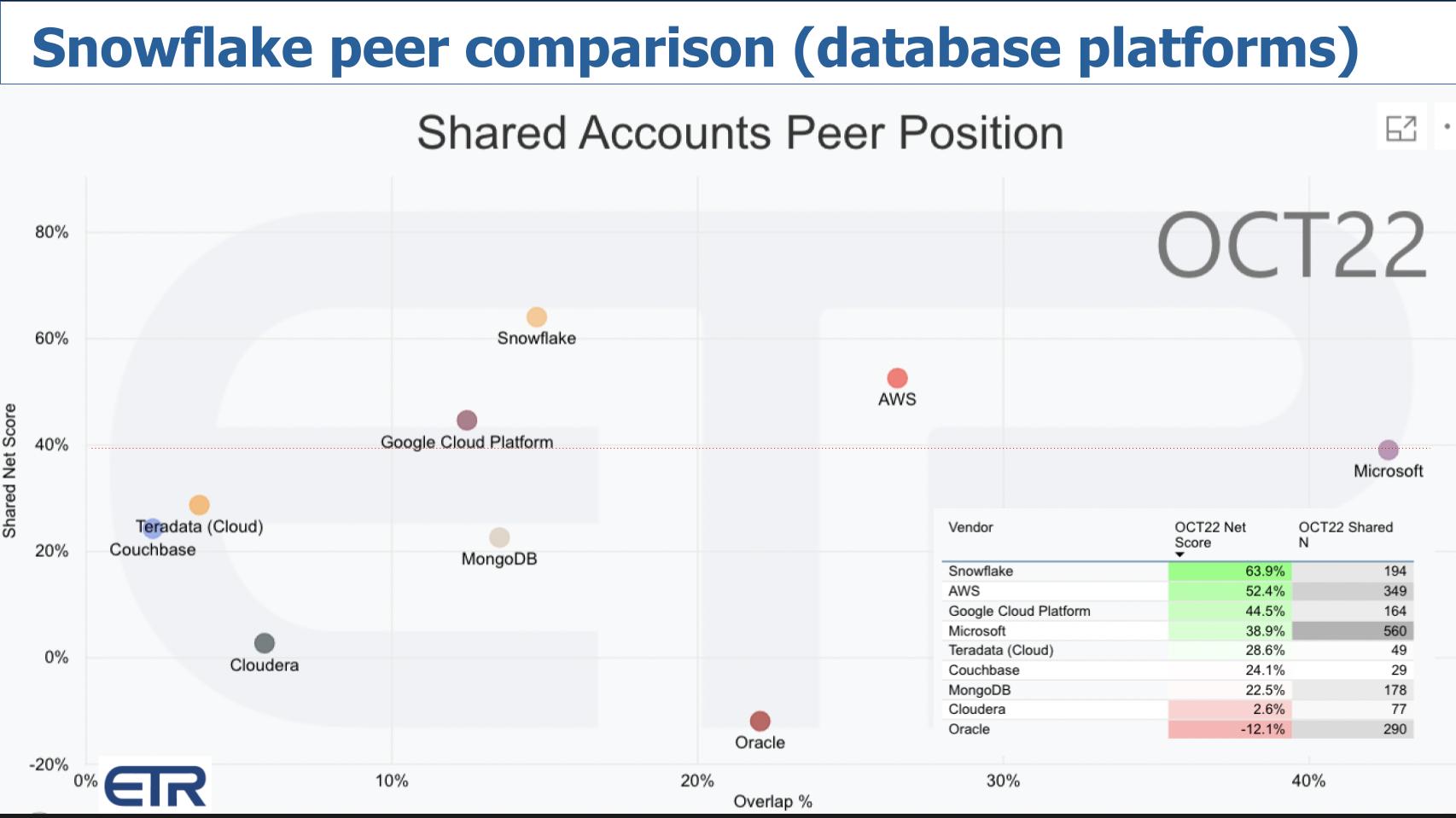

The chart above shows Net Score or spending velocity on the Y axis and Overlap or presence in the data set on the X axis. The red line at 40% represents a highly elevated Net Score. And the table insert informs as to how the companies are plotted – Net Score Y and Shared Ns X.

Here we’re comparing a number of database players, purposely excluding AWS, Microsoft and Google (for now). We include Oracle for reference because it is the king of database. Note that the Oracle data includes Oracle’s entire portfolio (including apps) but we have it here for context.

What are the key takeaways?

- Right off the bat, Snowflake jumps out with a Net Score of 64%;

- Streamlit, separated out from Snowflake core, sits right on top of Databricks;

- Only Snowflake, Streamlit and Databricks in this selection are above the 40% level;

- Mongo and Couchbase are solid and Teradata Cloud is showing meaningful progress in the market.

A Deeper Look at Database Platforms

In the previous chart we compared companies that primarily sell database solutions but we included other parts of their portfolio. Below we bring in the three US-based cloud players and isolate the data only on the database/data platform sector.

For this analysis we have the same XY dimensions but we’ve added in the database-only responses for AWS, Microsoft and Google. Notice that those three plus Snowflake are just at or above the 40% line. Snowflake continues to lead in spending momentum and keeps creeping to the right.

Now here’s an interesting tidbit. Snowflake is often asked how they compete with AWS, Microsoft and Google who offer Redshift, Synapse and BigQuery. Snowflake has been telling the street that 80% of its business comes from AWS. And when Microsoft heard that they said “whoa wait a minute let’s partner up Snowflake.” That’s because Microsoft is smart and they understand that the market is enormous. If they expand their partnership with Snowflake: 1) They may steal some business from AWS; and 2) Even if Snowflake is winning over Microsoft in database…if it wins on Azure, Microsoft sells more compute, more storage, more AI tools and more other stuff.

AWS is really aggressive from a partnering standpoint with Snowflake, constantly negotiating better prices. It understands that, when it comes to data, the cheaper you make it to process and store, the more people will consume. Scale economies and operating leverage are really powerful things at volume. While Microsoft is coming along in its Snowflake partnership, Google seems resistant to that type of go to market partnership.

Google’s Messaging of the “Open Data Cloud”

Rather than lean into Snowflake as a go to market partner and make pricing attractive for Snowflake customers, Google’s field force is fighting fashion. Google itself at Cloud Next heavily messaged what they called the “Open Data Cloud.” A direct ripoff from Snowflake.

What can we say about Google? They continue to be behind when it comes to enterprise selling.

Snowflake’s De-positioning of the Competition

Just a brief aside on competitive posture. We’ve observed Snowflake CEO Frank Slootman in action with his prior companies and the way he convincingly and passionately de-positions the competition. At Data Domain he eviscerated Avamar (owned by EMC) with its expensive and slow post process architecture. At one point we recall him actually calling it “garbage” (at least that’s our memory). EMC ended up acquiring Data Domain.

We saw Slootman absolutely destroy BMC when he was at ServiceNow, alluding to the IT departments supported by BMC Remedy (the competitive product) as the equivalent of the department of motor vehicles.

So it’s interesting to hear how Snowflake today openly talks about the data platforms of AWS, Microsoft, Google and Databricks.

Here’s the bumper sticker, sifting through Snowflake’s commentary:

- Redshift is an on-prem database that AWS morphed to the cloud;

- Microsoft sells a collection of legacy databases also morphed to run in the cloud;

- Even BigQuery, which is considered cloud native, is positioned by Snowflake as originally an on-prem database designed to support Google search;

- Databricks is for those people smart enough to get into Berkeley who love complexity. Snowflake doesn’t mention Berkeley as far as we know – that’s our addition. But you get the point.

The Evolution of Snowflake & Databricks: From Partners to Competitors

The interesting thing about Databricks and Snowflake is the relationship used to be much less contentious. Last decade on theCUBE we said a new workload type is emerging around data where you have AWS cloud + Snowflake + Databricks data science. And we felt at the time that this would usher in a new vector of growth for data. And it has. But we now see the aspirations of all three of these platforms colliding. It’s an interesting dynamic. Especially when you see both Snowflake and Databricks putting venture money into the same companies, like dbt Labs and Alation, and getting their hooks into those companies’ loyalties.

At any rate…Snowflake’s posture is “we are the pioneer in cloud native data warehouse, data sharing and data apps. Our platform is designed for business people who want simplicity. The other guys are formidable but we (Snowflake) have an architectural lead…and, unlike the big cloud players, we run in multiple clouds.”

It’s pretty strong positioning – or de-positioning – you have to admit. Not sure we agree with the BigQuery competitive knockoffs – that’s a bit of a stretch – but Snowflake as we see in the ETR survey data is winning.

Snowflake’s Third Wave

In thinking about the longer term future, let’s talk about what’s different with Snowflake, where it’s headed and what the opportunities are for the company’s future. And specifically address the $10B goal.

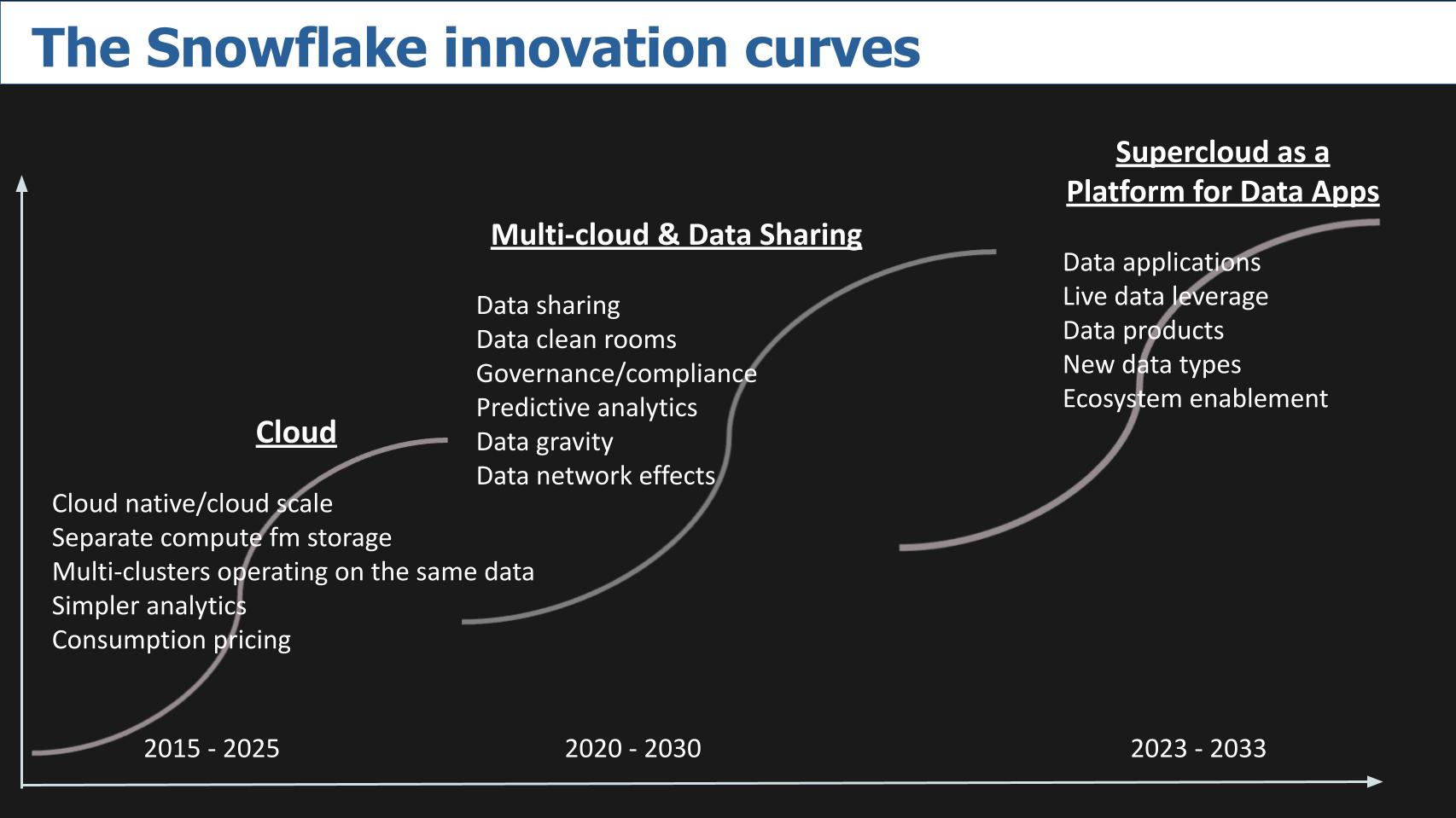

Snowflake in our view is riding three major decade-long waves. We describe our perspective below.

Act 1: Cloud Native Data Warehouse 2015 – 2025

Snowflake put itself on the map by focusing on simplifying data analytics. What’s interesting about the company is the founders are, as you probably know, from Oracle. And rather than focusing on transactional data, Oracle’s strong suit, the founders said we’re going to attack the data warehousing problem. And they completely re-imagined the database and what was possible with virtually unlimited compute and storage capacity. Of course Snowflake became famous for separating compute from storage; being able to shut down the compute so you didn’t have to pay for it. The ability to have multiple clusters hit the same data without making endless copies. And a consumption/cloud pricing model. Then everyone on the planet realized what a good idea this was and venture capitalists in Silicon Valley have been funding companies to copy that move. And that’s pretty much become table stakes today.

But we would argue that Snowflake not only had the lead but when you look at how others approach this problem it’s not as clean and elegant as Snowflake’s built from scratch implementation. AWS is a good example. Its version of separating compute from storage was an afterthought. While it’s good and customers like the feature, it’s more of a tiering to lower cost storage solution as opposed to a true separation from compute.

Having said that we’re talking about competitors like AWS with lots of resources and cohort offerings and so we don’t want to make this all about the product nuances. All things being equal though, architecture matters and Snowflake gets some props for its well-thought-out approach.

So that’s the cloud S-Curve and Snowflake is still on that curve. In and of itself it has legs but it’s not what is going to power the company to $10B.

Act 2: Multicloud & Data Sharing 2020 – 2030

The next S-Curve we denote is the Multi-cloud & Data Sharing curve in the middle. While 80% of Snowflake’s revenue comes from AWS, Microsoft is ramping and Google…well we’ll see. But getting traction in multiple clouds is a differentiator for Snowflake relative to the public cloud players. Snowflake’s global instance that spans multiple regions and multiple clouds sets it up for the third wave. For now, making a consistent experience irrespective of which cloud you’re running in is an important step to simplify data management.

But the more interesting part of that curve is data sharing and this idea of data clean rooms. And this is all about network effects and data gravity and you’re seeing this play out today. Especially in regulated industries like financial services and increasingly healthcare and government. Highly regulated verticals where folks are super paranoid about compliance. And what Snowflake has done is tell customers – put all the data into our data cloud and we’ll make sure it’s governed. Of course that triggers a lot of people because Snowflake is a walled garden. That’s the tradeoff – it’s not the wild west – it’s not Windows – it’s Mac. It’s more controlled. Both models can thrive.

But the idea is that as different parts of an organization or even partners begin to share data, they need it to be governed, secure and compliant. Snowflake has introduced the idea of stable edges. It’s a metric they track. Our understanding is a stable edge – or persistent edge – is a relationship between two parties that lasts some period of time – like more than a month. It’s not a one and done type of data sharing, it has longevity to it. And that metric is growing at more than 100% per quarter.

Around 20% of Snowflake customers are actively sharing data and the company tracks the number of persistent edge relationships within that base. So that’s something that is unique because again, most data sharing is all about making copies of data. Great for storage companies…bad for auditors and compliance officers.

This data sharing trend is just starting to hit the base of the steep part of the S-curve and we think will have legs through this decade.

Act 3: Supercloud as a Platform to Build Data Apps 2023 – 2033

Finally the third wave we show above is what we call Supercloud…the idea that Snowflake is offering a PaaS layer that’s purpose-built for a specific objective. In this case, building data apps that are cloud native, shareable and governed. This is a long term trend that will take some time to develop in our opinion. Adoption of application development platforms can take 5 to 10 years to mature and gain significant traction.

But this is a critical play for Snowflake. If it is going to compete with the big cloud players it has to have an app dev framework like Snowpark. It has to accommodate new data types like transactional data – that’s why it announced Unistore last June.

The pattern that’s forming here is Snowflake is building layer upon layer with its architecture at the core. It’s not (currently anyway) going out and buying revenue to instantly show growth in new markets to contribute to its $10B goal. Rather, the company is buying firms with tech that complements Snowflake and fits into the data cloud, tech that the company can organically turn into revenue for growth.

Is $10B Achievable? Is it too Conservative?

As to the company’s goal to hit $10B by FY 2028. Is that achievable? We think so. With the momentum, resources, go to market, product and management prowess that Snowflake has, yes. One could argue $10B is too conservative. Indeed, Snowflake CFO Mike Scarpelli will fully admit his forecasts are built on existing offerings. He’s not including revenue from all the new tech in the pipeline because he doesn’t have data on its adoption rates just yet. So unless Snowflake’s pipeline fails to produce, that milestone is likely beatable.
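For a feel of what a $10B target implies, here is a back-of-the-envelope sketch of the compound annual growth rate (CAGR) required to get there. The baseline revenue and the number of years are placeholders we made up for illustration, not Snowflake's reported figures or guidance. Plug in your own assumptions.

```python
def required_cagr(start_revenue: float, target_revenue: float, years: int) -> float:
    """Compound annual growth rate needed to go from start to target."""
    if start_revenue <= 0 or target_revenue <= 0 or years <= 0:
        raise ValueError("revenues and years must be positive")
    return (target_revenue / start_revenue) ** (1.0 / years) - 1.0


def project(start_revenue: float, growth_rate: float, years: int) -> list:
    """Revenue path at a constant annual growth rate, including year 0."""
    return [start_revenue * (1.0 + growth_rate) ** n for n in range(years + 1)]


# Hypothetical inputs, in billions of dollars. NOT reported figures.
BASELINE_B = 2.0   # assumed starting annual revenue
TARGET_B = 10.0    # the $10B goal discussed above
YEARS = 5          # assumed number of years to reach the target

rate = required_cagr(BASELINE_B, TARGET_B, YEARS)
print(f"Required CAGR: {rate:.1%}")
for year, revenue in enumerate(project(BASELINE_B, rate, YEARS)):
    print(f"Year {year}: ${revenue:.2f}B")
```

The takeaway is that the required rate depends heavily on the starting point and the time horizon. That's why a forecast built only on existing offerings can look conservative if the new products contribute at all.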

Now of course things can change quite dramatically. It’s possible that Scarpelli’s forecasts for existing businesses don’t materialize. Or competition picks them off or a company like Databricks actually is able to, in the longer term, replicate the functionality of Snowflake with open source technologies, which would be a very competitive source of innovation.

But in our view – there’s plenty of room for growth. The markets for data are enormous. The challenges with data silos have been well documented. The real key is can and will Snowflake deliver on the promises of simplifying data. We’ve heard this before from data warehouses to data marts and data lakes and MDM and ETLs and data movers and data copiers and Hadoop and a raft of technologies that have not lived up to expectations.

At the same time, we’ve also seen some tremendous successes in the software business with the likes of ServiceNow and Salesforce. So will Snowflake be the next great software name and hit the $10B mark?

We think so. Let’s reconnect in 2028 and see. Or if you have an opinion, let us know.

Keep in Touch

Thanks to Alex Myerson and Ken Shifman who are on production, podcasts and media workflows for Breaking Analysis. Special thanks to Kristen Martin and Cheryl Knight who help us keep our community informed and get the word out. And to Rob Hof, our EiC at SiliconANGLE.

Remember we publish each week on Wikibon and SiliconANGLE. These episodes are all available as podcasts wherever you listen.

Email david.vellante@siliconangle.com | DM @dvellante on Twitter | Comment on our LinkedIn posts.

Also, check out this ETR Tutorial we created, which explains the spending methodology in more detail.

Note: ETR is a separate company from Wikibon and SiliconANGLE. If you would like to cite or republish any of the company’s data, or inquire about its services, please contact ETR at legal@etr.ai.

All statements made regarding companies or securities are strictly beliefs, points of view and opinions held by SiliconANGLE Media, Enterprise Technology Research, other guests on theCUBE and guest writers. Such statements are not recommendations by these individuals to buy, sell or hold any security. The content presented does not constitute investment advice and should not be used as the basis for any investment decision. You and only you are responsible for your investment decisions.

Disclosure: Many of the companies cited in Breaking Analysis are sponsors of theCUBE and/or clients of Wikibon. None of these firms or other companies have any editorial control over or advance viewing of what’s published in Breaking Analysis.